| Group | Event Name | Time |
|---|---|---|
| Wesley Chapel, Trinity, New Tampa, Business Professionals • Wesley Chapel, FL | Business Over Breakfast ~ Happy Hangar IN PERSON JOIN US! | Thu, May 25 · 7:30 AM EDT |
| Pasco County Young Entrepreneurs/Business Owners All Welcome • Wesley Chapel, FL | Happy Hangar Early Bird Professionals Networking | Thu, May 25 · 7:30 AM EDT |
| Tampa Bay Networking and Events • Largo, FL | LUTZ, FL – HAPPY HANGER CAFE THURSDAY NETWORKING | Thu, May 25 · 8:00 AM EDT |
| Agile Mindset Book Club (Tampa, FL) • Tampa, FL | The Wisdom of the Bullfrog: Leadership Made Simple (But Not Easy) Book Club | Thu, May 25 · 8:00 AM EDT |
| Entrepreneur Round Table • Dunedin, FL | 2023 Reset – Small Business SPARK! | Thu, May 25 · 8:30 AM EDT |
| Clearwater Entrepreneurs’ Round Table • Dunedin, FL | 2023 SPARK Your Small Business! | Thu, May 25 · 8:30 AM EDT |
| Tampa Business Referral Networking Group • Tampa, FL | Tampa Business Networking Group | Thu, May 25 · 8:30 AM EDT |
| TampaBayNetworkers • Pinellas Park, FL | Suncoast Networkers | Thu, May 25 · 8:30 AM EDT |
| USBH Business Networking • Port Richey, FL | USBH Business Networking | Thu, May 25 · 9:00 AM EDT |
| Florida Startup: Idea to IPO • Tampa, FL | How to Cut Product Development Costs by up to 50%! | Thu, May 25 · 11:00 AM EDT |
| Business Professionals of Pinellas, Pasco & Hillsborough • Largo, FL | Clearwater Professional Networking Lunch | Thu, May 25 · 11:00 AM EDT |
| Young Professionals Networking JOIN in and Connect! • Saint Petersburg, FL | The Founders Meeting where it all Began! JOIN us! Bring a guest and get a gift | Thu, May 25 · 11:00 AM EDT |
| Tampa Bay Networking and Events • Largo, FL | Pinellas County’s Largest Networking Lunch and your invited! | Thu, May 25 · 11:00 AM EDT |
| ManageEngine’s Cybersecurity Meetups • Orlando, FL | 7 security issues you can find with the help of SIEM | Thu, May 25 · 11:00 AM EDT |
| Orlando Unity Developers Group • Orlando, FL | Online: 12 Principles of Animation in 3D – Blender | Thu, May 25 · 11:00 AM EDT |
| Tampa / St Pete Business Connections • Tampa, FL | Clearwater/Central Pinellas Networking Lunch JOIN us and connect your business | Thu, May 25 · 11:00 AM EDT |
| Tampa Bay Business Networking Happy Hour/Meetings/Meet Up • Clearwater, FL | Pinellas County’s Largest Networking Lunch and your invited! | Thu, May 25 · 11:00 AM EDT |
| ClearlyAgile | Creating Actionable User Stories: Methods for Writing and Splitting with Purpose | Thu, May 25 · 11:30 AM EDT |
| Wesley Chapel, Trinity, New Tampa, Business Professionals • Wesley Chapel, FL | Wesley Chapel Professional Networking Lunch | Thu, May 25 · 11:30 AM EDT |
| Pasco County Young Entrepreneurs/Business Owners All Welcome • Wesley Chapel, FL | Lutz / Wesley Chapel Professional Networking Lunch at Bahama Breeze! | Thu, May 25 · 11:30 AM EDT |
| Professional Business Networking with RGAnetwork.net • Tampa, FL | Breakroom Bar & Grill 11:28 Doors open at 11:00am IN Person | Thu, May 25 · 11:30 AM EDT |
| Ironhack Tampa – Tech Careers, Learning and Networking • Tampa, FL | Using Technology to Break Barriers | Thu, May 25 · 12:00 PM EDT |
| Simply Referrals Tampa Business Networking • Tampa, FL | The Business Networking Midday Power Hour by Simply Referrals on Zoom! | Thu, May 25 · 12:00 PM EDT |
| Clearwater Business Networking • Clearwater, FL | The Business Networking Midday Power Hour by Simply Referrals on Zoom! | Thu, May 25 · 12:00 PM EDT |
| Tampa Bay Gaming: RPG’s, Board Games & more! • Tampa, FL | Commander Open Play Night at Armada Games | Thu, May 25 · 1:00 PM EDT |
| Network After Work Tampa – Networking Events • Tampa, FL | The Secrets of Getting Unlimited Business Credit | Thu, May 25 · 2:00 PM EDT |
| StartUp Xchange • Saint Petersburg, FL | Startup Strategy Office Hours | Thu, May 25 · 2:00 PM EDT |
| Meeple Movers Gaming Group • Ocala, FL | Pathfinder Society at Meeple Movers | Thu, May 25 · 2:00 PM EDT |
| Gulfport Scrabblers • Saint Petersburg, FL | Gulfport Scrabble and Social | Thu, May 25 · 5:00 PM EDT |
| Pinellas Tech Network • Palm Harbor, FL | The Future of Programming: Python & AI | Thu, May 25 · 6:00 PM EDT |

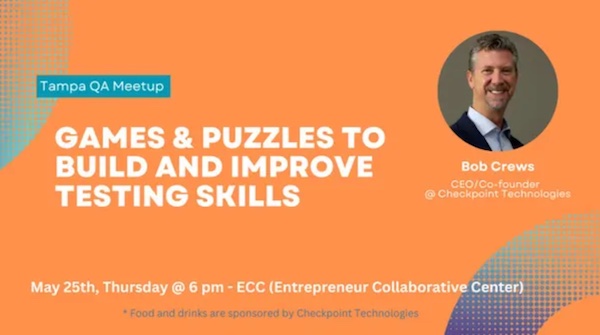

| Group | Event Name | Time |
|---|---|---|
| Tampa Bay QA & Testing Meetup • Tampa, FL | 🎲 Games & Puzzles to Build and Improve Testing Skills | Thu, May 25 · 6:00 PM EDT |
| Ironhack Tampa – Tech Careers, Learning and Networking • Tampa, FL | The Basics of Web Development | Thu, May 25 · 6:00 PM EDT |
| Tampa Bay DevOps Meetup • Tampa, FL | Tampa Bay DevOps Meetup – May Event | Thu, May 25 · 6:00 PM EDT |
| Network After Work Tampa – Networking Events • Tampa, FL | Tampa at WXYZ Bar at Aloft Tampa Downtown | Thu, May 25 · 6:00 PM EDT |

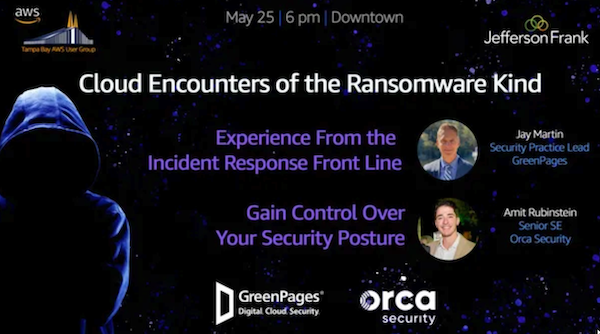

| Group | Event Name | Time |
|---|---|---|
| Tampa Bay AWS User Group • Tampa, FL | Cloud Encounters of the Ransomware Kind – Incident Response Experiences | Thu, May 25 · 6:00 PM EDT |
| Bitcoin Key Club • Sarasota, FL | Monthly Meetup | Thu, May 25 · 6:00 PM EDT |
| Makerspaces Pinellas Meetup Group • Largo, FL | Basics Electronics Soldering | Thu, May 25 · 6:00 PM EDT |
| Brandon and Seffner area AD&D and OSR Group • Seffner, FL | 1st ed. AD&D Campaign. | Thu, May 25 · 6:00 PM EDT |
| Critical Hit Games • Saint Petersburg, FL | Pathfinder Society | Thu, May 25 · 6:00 PM EDT |
| Orlando Board Gaming Weekly Meetup • Orlando, FL | Central Florida Board Gaming at The Collective | Thu, May 25 · 6:00 PM EDT |
| Trainers Backpack Hobby Shop Small Groups • Oviedo, FL | Competitive Pokemon TCG Battling Group | Thu, May 25 · 6:00 PM EDT |
| Sarasota / Venice Photography Meetup Group • Venice, FL | Street & Architecture Photography Downtown Sarasota | Thu, May 25 · 6:00 PM EDT |
| Clearwater Boardgame Night • Saint Petersburg, FL | Clearwater Board Game Night | Thu, May 25 · 6:00 PM EDT |
| We Write Here Black and Women of Color Writing Group • Tampa, FL | Weekday Writing Get Down | Thu, May 25 · 6:00 PM EDT |

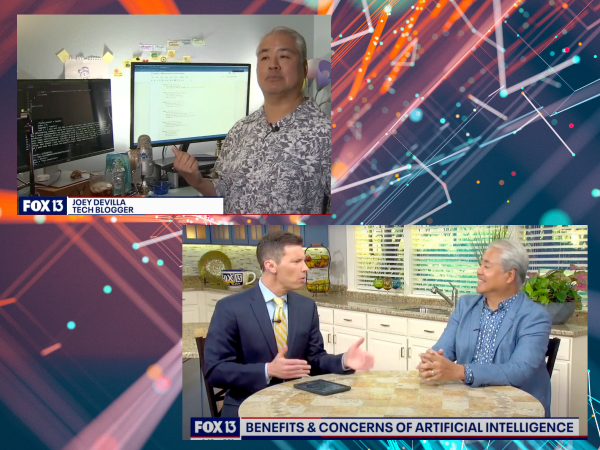

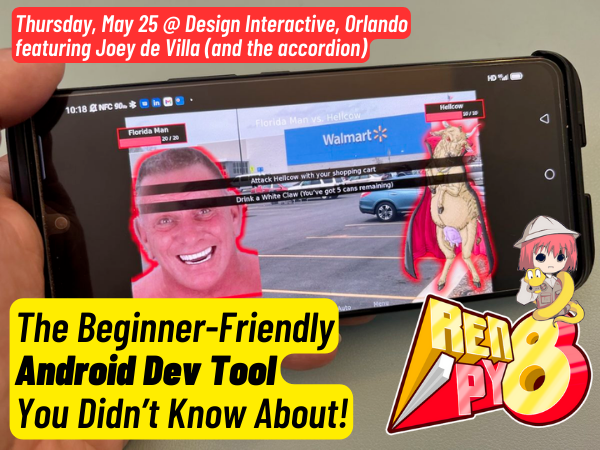

| Group | Event Name | Time |
|---|---|---|
| Google Developer Group Central Florida • Orlando, FL | The Beginner-Friendly Android Dev Tool You didn’t know! by Joey deVilla! 🎹 | Thu, May 25 · 6:30 PM EDT |
| Young Entrepreneurs of America YEA Meetup Group • Tampa, FL | YEA: Young Entrepreneur Networking & Mixer | Thu, May 25 · 6:30 PM EDT |
| Our Photo Tribe Workshops • Tampa, FL | Digital Camera Basics – Online | Thu, May 25 · 6:30 PM EDT |
| Tampa Writers Alliance • Tampa, FL | Tampa Writers Alliance Poetry Group | Thu, May 25 · 6:30 PM EDT |
| Tampa Free Writing Group • Tampa, FL | Writing Meetup | Thu, May 25 · 6:30 PM EDT |
| Beginning Web Development • Windermere, FL | Weekly Meetup | Thu, May 25 · 7:00 PM EDT |
| Tampa Hackerspace • Tampa, FL | 3D Printing Guild | Thu, May 25 · 7:00 PM EDT |
| Tampa Hackerspace • Tampa, FL | Make a LED Edge Lit Name Plaque (Members Only) | Thu, May 25 · 7:00 PM EDT |
| IIBA Tampa Bay • Tampa, FL | BABOK IIBA Tampa Bay Study Group | Thu, May 25 · 7:00 PM EDT |
| Tampa Bay Technology Center • Clearwater, FL | WordPress | Thu, May 25 · 7:00 PM EDT |
| WordPress Clearwater FL • Clearwater, FL | WordPress Monthly Meetup | Thu, May 25 · 7:00 PM EDT |
| The Pinellas County Young “Professionals” • Saint Petersburg, FL | Real Life Tinder – SPEED DATING – Ages 25-40 | Thu, May 25 · 7:00 PM EDT |
| Sunshine Games • Tampa, FL | FABulous Thursdays | Thu, May 25 · 7:00 PM EDT |
| TB Chess – Tampa Bay – St. Petersburg Chess Meetup Group • Saint Petersburg, FL | Thursday chess at 54th Ave Kava House! | Thu, May 25 · 7:00 PM EDT |
| Tampa – Sarasota – Venice Trivia & Quiz Meetup • Bradenton, FL | Smartphone Trivia Game Show at Wilders Pizza | Thu, May 25 · 7:00 PM EDT |
| Nerdbrew Events • Tampa, FL | FOWL PLAY @ Gangchu! | Thu, May 25 · 7:00 PM EDT |
| Cocktails and Convo: Book club for RomCom Lovers • Pinellas Park, FL | Cocktails and Convos social hour | Thu, May 25 · 7:00 PM EDT |
| Live streaming production and talent • Tarpon Springs, FL | Live streaming production and talent | Thu, May 25 · 7:00 PM EDT |
| Indica Cafe Events • Tampa, FL | Dungeons and Dragons Thursday Nights at 7 | Thu, May 25 · 7:00 PM EDT |
| Nerd Night Out • Tampa, FL | Seminole Heights Thurs Game Night @ Gangchu! | Thu, May 25 · 7:00 PM EDT |
| Tampa Bay Bitcoin • Tampa, FL | Bitcoin Social | Thu, May 25 · 7:30 PM EDT |
| St. Pete Booze and Book Club • Saint Petersburg, FL | The Only Good Indians – Stephen Graham Jones | Thu, May 25 · 7:30 PM EDT |
| Plant City Web Development Group • Plant City, FL | Weekly Meetup | Thu, May 25 · 8:00 PM EDT |
| Florida AWS Security Users Meetup Group • Sarasota, FL | AWS New Certified Security Specialty (SCS-C02) – Bootcamp – Week 4 | Thu, May 25 · 8:00 PM EDT |

Once again, the event is the 4th annual Tampa Bay end-of-year tech meetup, and it’s happening on Wednesday, December 11 at 5:30 p.m. at Embarc Collective. We’ll see you there!
