This is just a reminder that there’s a Global Nerdy YouTube channel. I’m ramping up video production, so expect to see a lot more stuff there soon!

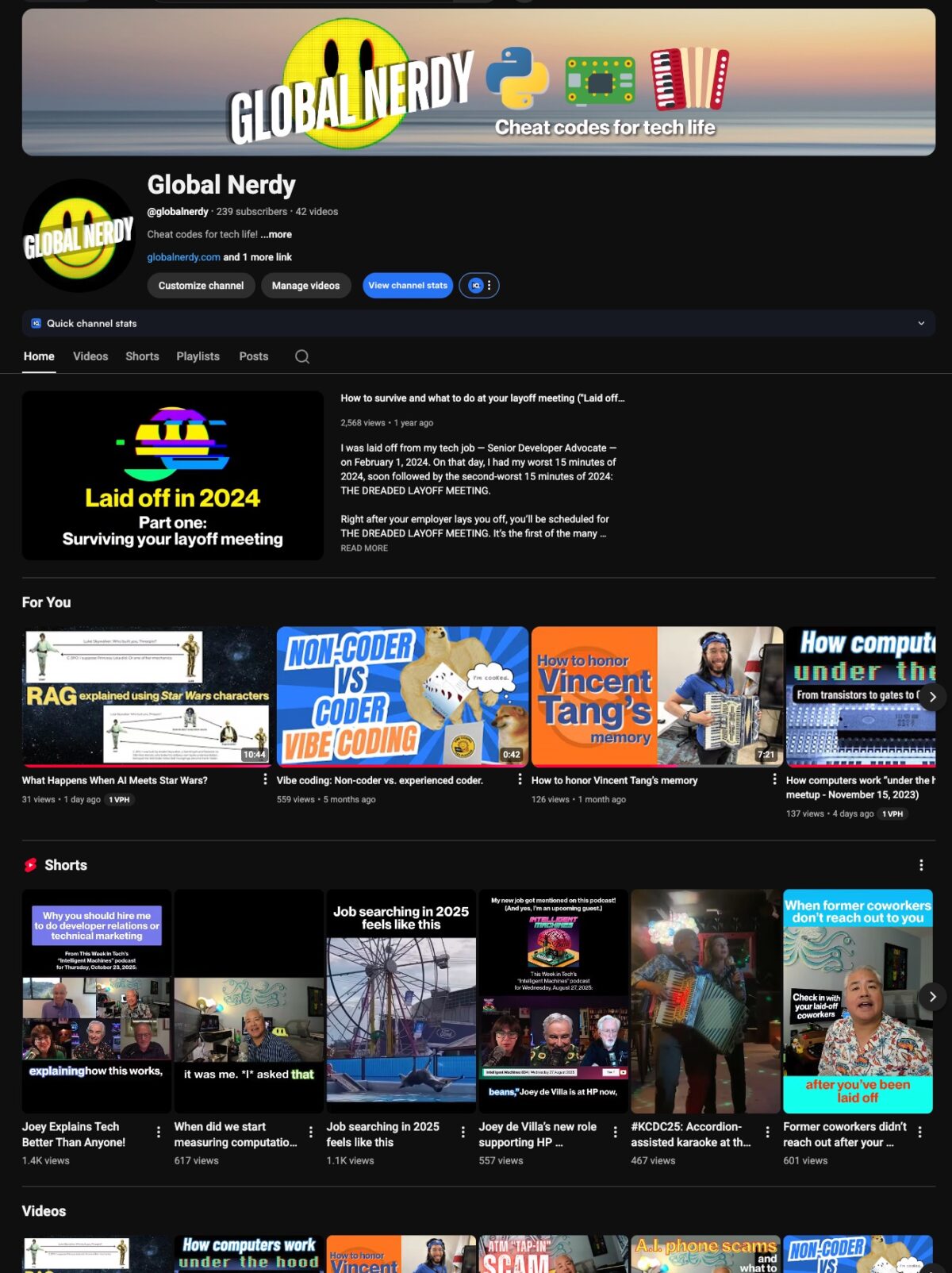

Do you know how your computer works? If not, this video’s for you!

Here’s the video, which is the latest one on the Global Nerdy YouTube channel:

The video features the How Computers Work “Under the Hood” presentation that I gave at a Tampa Devs meetup on November 15, 2023.

In the presentation, I start by talking about the CPU chips in our computers, phones, and electronic devices:

…and then proceed to talk about the building blocks for these chips, transistors:

Then, after a quick introduction to the 6502 processor, which powered a lot of 1980s home computers…

…I introduce 6502 assembly language programming:

Watch the video, and learn how your computer works “under the hood!”

If you’d like to follow along with the video and try out the exercises I demonstrated, you can do so from the comfort of your own browser — just follow this guide!

Want the slides for my presentation? Here they are!

The newest video on the Global Nerdy YouTube channel is now online! Its title and thumbnail will evolve over the next couple of days, but as I write this (the evening of Sunday, August 11, 2024), the thumbnail looks like the one above and the title is Surviving a Layoff: Mental Health Tips & Tricks.

(YouTube titles and thumbnails can be changed even after the video is posted, and many YouTubers change them as they figure out which versions attract “search” and “browse” viewers.)

Near the start of the video, I suggest to viewers that they try to come up with their own mantra to help them through their layoff journey:

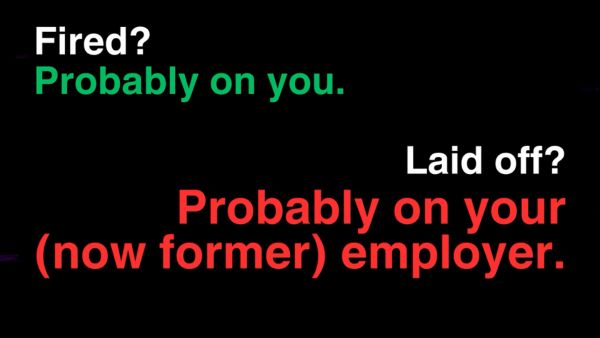

I also remind viewers that there’s a difference between being fired and being laid off:

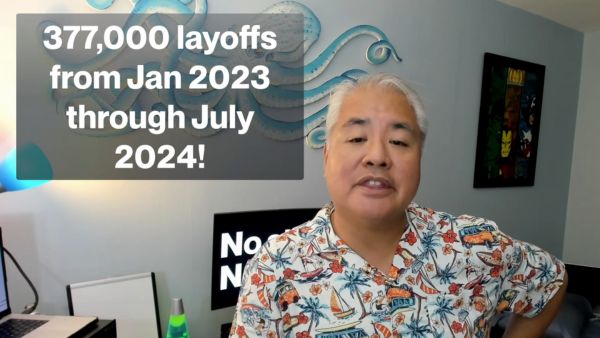

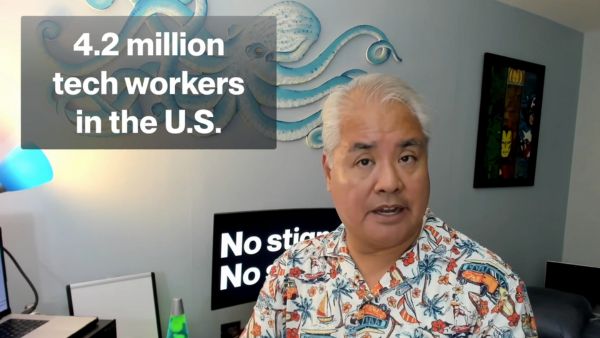

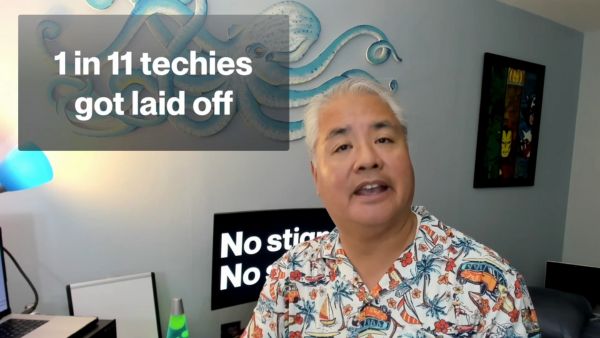

Here are some layoff stats to reassure you that if you’ve been laid off, you’re not alone:

Making things worse is the fact that shareholders love layoffs — they’re cost savings, which can boost stock prices:

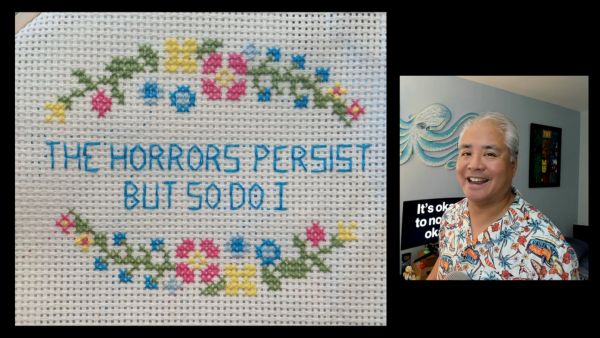

Remember this motto:

I also go through some of the items in the Life Events Inventory, a ranked list of the most stressful events in life. Guess where getting laid off is in the list — I won’t show you here, though; you’ll have to watch the video!

Here’s the most pithy advice I have for expressing the emotions you may have in the aftermath of being laid off, courtesy of Scott Hanselman:

I talk about the benefits of exercise…

…remind the viewer that it’s always 5 p.m. somewhere…

…and yes, I make a reference not just to “That Site,” but That Site’s identifying drum riff:

You don’t need a unicorn gratitude journal to make it through a layoff, but you should practice gratitude to help you through the process:

I suggest that it might be therapeutic to get rid of at least some of your (former) company swag, but hang on to the stuff that’s useful. I’m hanging on to the Patagonia sweater they sent to me (ironically, a week or so before they laid me off) because it’s nice and warm, and I’m willing to put up with the “VC Bro” vibes it gives off:

And finally, here’s one of the images I use to explain that if you need therapy or counseling, get it:

The latest video on the Global Nerdy YouTube channel is The CrowdStrike Outage Explained!

How did the CrowdStrike Bug of July 19, 2024 take down 8.5 million Windows systems and cause the biggest global outage of all time? I’ll explain in this video, where you’ll also learn about operating systems, the kernel, device drivers, and more!

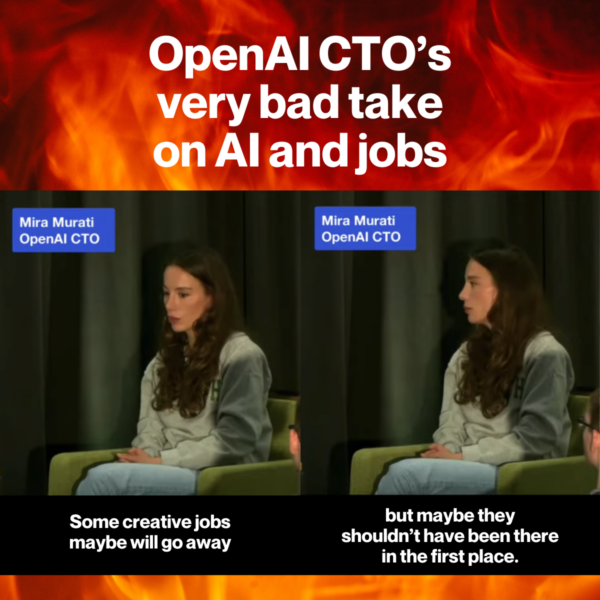

Some techies hold the attitude that “what I do is important, and what you do isn’t,” and the more socially savvy ones don’t say the quiet part out loud.

But Mira Murati, OpenAI’s CTO, did just that onstage at her alma mater, Dartmouth College, where she said this about AI displacing jobs in creative lines of work:

Some creative jobs maybe will go away, but maybe they shouldn’t have been there in the first place.

Mira Murati, from AI Everywhere: Transforming Our World, Empowering Humanity

(she says this around the 29:30 mark)

Here’s my take on her bad take, courtesy of the Global Nerdy YouTube channel, which you should subscribe to…

…and here’s the video with her full talk at Dartmouth:

The laws of time, effort, and experience make it very clear: I’m in the middle of making my worst videos right now, and you’ll want to subscribe to see how bad they are!

Come check out the awfulness on the Global Nerdy YouTube channel, located at youtube.com/@GlobalNerdy!

I’ve already posted the first two videos. The first is a short that looks at an odd paragraph in an O’Reilly article on AI…

…and the second is a blast from the past — a promotional video featuring images of a lot of top-tier developers, followed by an image that’s supposed to represent you, the everyday developer…and guess whose image they used:

There’ll be a mix of short- and long-form videos, where I’ll cover software development topics and technology news in interesting, unusual, and amusing ways.

I’m spending the month of June working on the first set of videos, which I’ll release as quickly as I can, so you know they’ll be bad. And if you’re thinking “But HOW bad?”, there’s only one way to find out: visit the channel and subscribe!

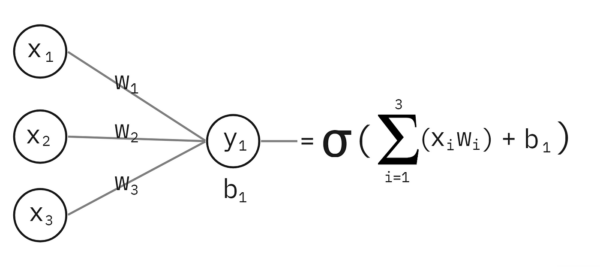

If you’ve tried to go past the APIs like the ones OpenAI offers and learn how they work “under the hood” by trying to build your own neural network, you might find yourself hitting a wall when the material opens with equations like this:

How can you learn how neural networks — or more accurately, artificial neural networks — do what they do without a degree in math, computer science, or engineering?

There are a couple of ways:

Along the way, both I (in this blog) and Tariq (in his book) will trick you into learning a little science, a little math, and a little Python programming. In the end, you’ll understand the diagram above!

One more thing: if you prefer your learning via video…