On Thursday, May 8th from 11 a.m. to 3:00 p.m. Eastern, O’Reilly Media will host a free online conference called AI Codecon. “Join us to explore the future of AI-enabled development,” the tagline reads, and their description of the event starts with their belief that AI’s advance does NOT mean the end of programming as a career, but a transition.

Here’s what I plan to do with this event:

- Register for the event

- Log in when it starts and fire up a screen recorder

- Watch the event in the background while working

- Generate a transcript from the recording and feed it into a couple of LLMs

- Have the LLMs answer any questions I may have and generate summaries and “going forward” game plans based on the content and my future plans
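As a rough sketch of the transcript step in the plan above: a four-hour event produces a long transcript, so it may need to be split into overlapping chunks before each one is handed to an LLM. Everything below is an assumption for illustration. The function names, the word-based chunk size, the overlap, and the prompt wording are all hypothetical, and no particular transcription tool or LLM API is implied.

```python
from typing import List


def chunk_transcript(text: str, max_words: int = 1500, overlap: int = 100) -> List[str]:
    """Split a transcript into word-based chunks that overlap slightly.

    The overlap keeps a sentence that straddles a boundary visible in both
    neighboring chunks. The sizes are illustrative defaults, not tuned values.
    """
    if max_words <= 0 or not 0 <= overlap < max_words:
        raise ValueError("need max_words > 0 and 0 <= overlap < max_words")
    words = text.split()
    chunks: List[str] = []
    step = max_words - overlap
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + max_words]))
        # Stop once this chunk has reached the end of the transcript.
        if start + max_words >= len(words):
            break
    return chunks


def build_summary_prompt(chunk: str, index: int, total: int, goals: str) -> str:
    """Build a plain-text prompt asking for a summary and next steps.

    `goals` is a short description of the reader's own plans, so the
    "going forward" suggestions can be tailored to them.
    """
    return (
        f"This is part {index} of {total} of a conference transcript.\n"
        "Summarize the key points, then suggest next steps "
        f"for someone with these goals: {goals}\n\n"
        f"Transcript:\n{chunk}"
    )
```

Each prompt can then be pasted into, or sent to, whichever LLMs are being compared, and the per-chunk summaries combined afterwards.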

The agenda for AI Codecon

Here’s the schedule for AI Codecon, which is still being finalized as I write this:

- Introduction, with Tim O’Reilly (10 minutes)

- Gergely “Pragmatic Engineer” Orosz and Addy Osmani Fireside Chat (20 minutes)

Gergely Orosz joins Addy Osmani for an insightful discussion on the evolving role of AI in software engineering and how it’s paving the way for a new era of agentic, “AI-first” development.

- Vibe Coding: More Experiments, More Care – Kent Beck (15 minutes)

Augmented coding deprecates formerly leveraged skills such as language expertise, and amplifies vision, strategy, task breakdown, and feedback loops. Kent Beck, creator of Extreme Programming, tells you what he’s doing and the principles guiding his choices.

- Junior Developers and Generative AI – Camille Fournier, Avi Flombaum, and Maxi Ferreira (15 minutes)

Is bypassing junior engineers a recipe for short-term gain but long-term instability? Or is it a necessary evolution in a high-efficiency world? Hear three experts discuss the trade-offs in team composition, mentorship, and organizational health in an AI-augmented industry.

- My LLM Codegen Workflow at the Moment – Harper Reed (15 minutes)

Technologist Harper Reed takes you through his LLM-based code generation workflow and shows how to integrate various tools like Claude and Aider. You’ll gain insights into optimizing LLMs for real-world development scenarios, leading to faster and more reliable code production.

- Jay Parikh and Gergely Orosz Fireside Chat (15 minutes)

Jay Parikh, executive vice president at Microsoft, and Gergely Orosz, author of The Pragmatic Engineer, discuss AI’s role as the “third runtime,” the lessons from past technological shifts, and why software development isn’t disappearing; it’s evolving.

- The Role of Developer Skills in Today’s AI-Assisted World – Birgitta Böckeler (15 minutes)

Birgitta Böckeler, global lead for AI-assisted software delivery at Thoughtworks, highlights instances where human intervention remains essential, based on firsthand experiences. These examples can inform how far we are from “hands-free” AI-generated software and the skills that remain essential, even with AI in the copilot seat.

- Modern Day Mashups: How AI Agents Are Reviving the Programmable Web – Angie Jones (5 minutes)

Angie Jones, global vice president of developer relations at Block, explores how AI agents are bringing fun and creativity back to software development and giving new life to the “programmable web.”

- Tipping AI Code Generation on its Side – Craig McLuckie (5 minutes)

The current wave of AI code generation tools are closed, vertically integrated solutions. The next wave will be open, horizontally aligned systems. Craig McLuckie explores this transformation, why it needs to happen, and how it will be led by the community.

- Prompt Engineering as a Core Dev Skill: Techniques for Getting High-Quality Code from LLMs – Patty O’Callaghan (5 minutes)

Patty O’Callaghan highlights practical techniques to help teams generate high-quality code with AI tools, including an “architecture-first” prompting method that ensures AI-generated code aligns with existing systems, contextual scaffolding techniques to help LLMs work with complex codebases, and the use of task-specific prompts for coding, debugging, and refactoring.

- Chip Huyen and swyx Fireside Chat (20 minutes)

Chip Huyen will delve [Aha! An AI wrote this! — Joey] into the practical challenges and emerging best practices for building real-world AI applications, with a focus on how foundation models are enabling a new era of autonomous agents.

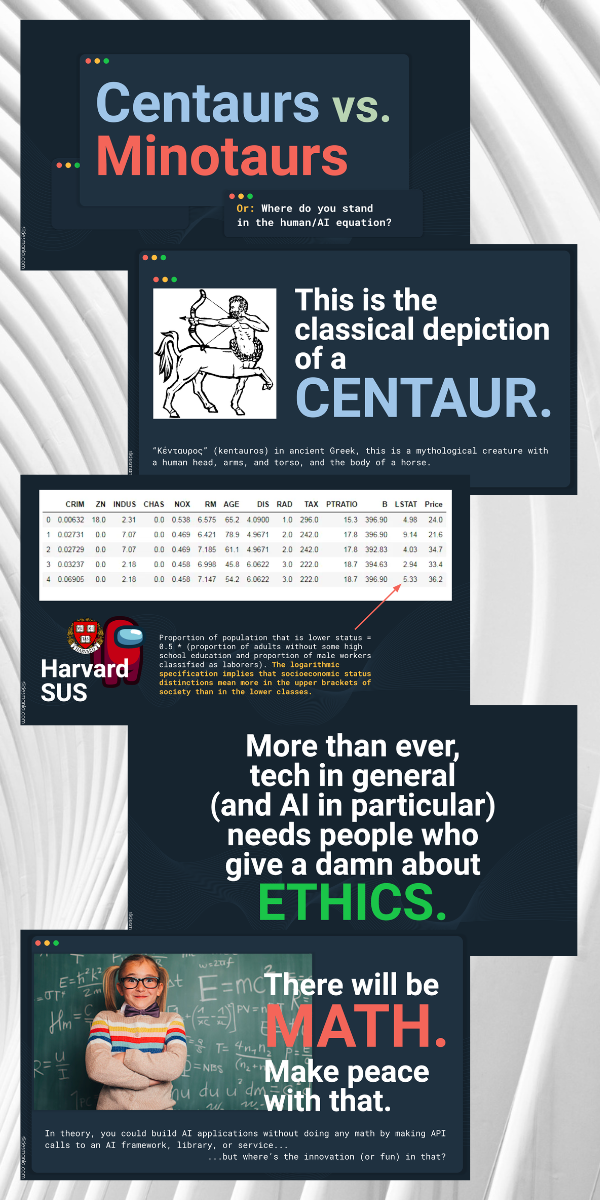

- Bridging the AI Learning Gap: Teaching Developers to Think with AI – Andrew Stellman (15 minutes)

Andrew Stellman, software developer and author of Head First C#, shares lessons from Sens-AI, a learning path built specifically for early-career developers, and offers insights into the gap between junior and senior engineers.

- Lessons Learned Vibe Coding and Vibe Debugging a Chrome Extension with Windsurf – Iyanuoluwa Ajao (5 minutes)

Software and AI engineer Iyanuoluwa Ajao explores the quirks of extension development and how to vibe code one from scratch. You’ll learn how Chrome extensions work under the hood, how to vibe code an extension by thinking in flows and files, and how to vibe debug using dependency mapping and other techniques.

- Designing Intelligent AI for Autonomous Action – Nikola Balic (5 minutes)

Nikola Balic, head of growth at VC-funded startup Daytona, uses case studies such as AI-powered code generation and autonomous coding to show key patterns for balancing speed, safety, and strategic decision-making. You’ll also gain a road map for catapulting legacy systems into agent-driven platforms.

- Secure the AI: Protect the Electric Sheep – Brett Smith (5 minutes)

Distinguished software architect, engineer, and developer Brett Smith discusses AI security risks to the software supply chain, covering attack vectors, how they relate to the OWASP Top 10 for LLMs, and how they tie into scenarios in CI/CD pipelines. You’ll learn techniques for closing the attack vectors and protecting your pipelines, software, and customers.

- How Does GenAI Affect Developer Productivity? – Chelsea Troy (15 minutes)

The advent of consumer-facing generative models in 2021 catalyzed a massive experiment in production on our technical landscape. A few years in, we’re starting to see published research on the results of that experiment. Join Chelsea Troy, leader of Mozilla’s MLOps team, for a tour through the current findings and a few summative thoughts about the future.

- Eval Engineering: The End of Machine Learning Engineering as We Know It – Lili Jiang (15 minutes)

Lili Jiang, former Waymo evaluation leader, reveals how LLMs are transforming ML engineering. Discover why evaluation is becoming the new frontier of ML expertise, how eval metrics are evolving into sophisticated algorithms, and why measuring deltas instead of absolute performance creates powerful development flywheels.

- Closing Remarks – Tim O’Reilly (10 minutes)