Last week, Anitra and I attended both the Dev/Nexus conference and its companion conference, Advantage, an AI conference for CTOs, CIOs, VPs of Engineering, and other technical lead-types, which took place the day before Dev/Nexus. My thanks to Pratik Patel for the guest passes to both conferences!

I took copious notes and photos of all the Advantage talks and will be posting summaries here. This first set of notes is from the first talk, A Leader’s Playbook for AI, presented by Frank Greco.

Frank is a senior technology consultant and enterprise architect working on cloud and AI/ML tools for developers. He is a Java Champion, Chairman of the NYJavaSIG (first JUG ever), and runs the International Machine Learning for the Enterprise conference in Europe. Co-author of JSR 381 Visual Recognition for Java API standard and strong advocate for Java and Machine Learning. Member of the NullPointers. #STEAMnotSTEM

And here’s the abstract of his talk:

AI is no longer a side experiment. It is quickly becoming a standard part of enterprise IT, both in how systems get built and how teams get work done. For CIOs, CTOs, and team leads, the hard part is figuring out which AI efforts will actually pay off without creating unnecessary risk for the company. In this session, you will get a practical way to pick the right first pilots, define success metrics that matter, and avoid the most common traps. Those traps include leaking sensitive data, getting unreliable output, having no clear owner, and running pilots that never turn into real ROI. We will talk about how AI tools fit into everyday team workflows, how to balance value and risk so you know where to start, and what guardrails to put in place from day one. That includes data boundaries, human oversight, auditability, evaluation, and safe fallback behavior. You will leave with a simple checklist and an action plan you can use right away to launch a secure, measurable AI pilot that your team can ship and your organization can scale.

My notes from Frank’s talk are below.

Most people don’t know what AI is, and that’s okay

One of the most reassuring moments in Frank’s talk came early. It was a reality check about how widely AI is actually understood. Frank pushed back against the anxiety many tech leaders feel about falling behind, pointing out that most people (which includes plenty of CTOs at large companies) genuinely don’t know what generative AI is or how to use it effectively. The adoption curve is far flatter than the hype suggests, and people inside the I.T. bubble consistently overestimate how much the rest of the world has embraced this technology.

This doesn’t mean complacency is wise, but it does mean leaders can take a breath before making reactive, poorly-considered AI investments. The real competitive advantage is in taking the time to actually understand AI instead of rushing in blindly. Frank’s argument is that leaders who build foundational knowledge now will be far better positioned than those who bolt on AI tools under pressure and learn nothing durable in the process.

Three pillars of an AI strategy

Frank outlined a clean, actionable framework for leaders thinking about where to start: AI/business strategy, understanding the core technology, and implementation.

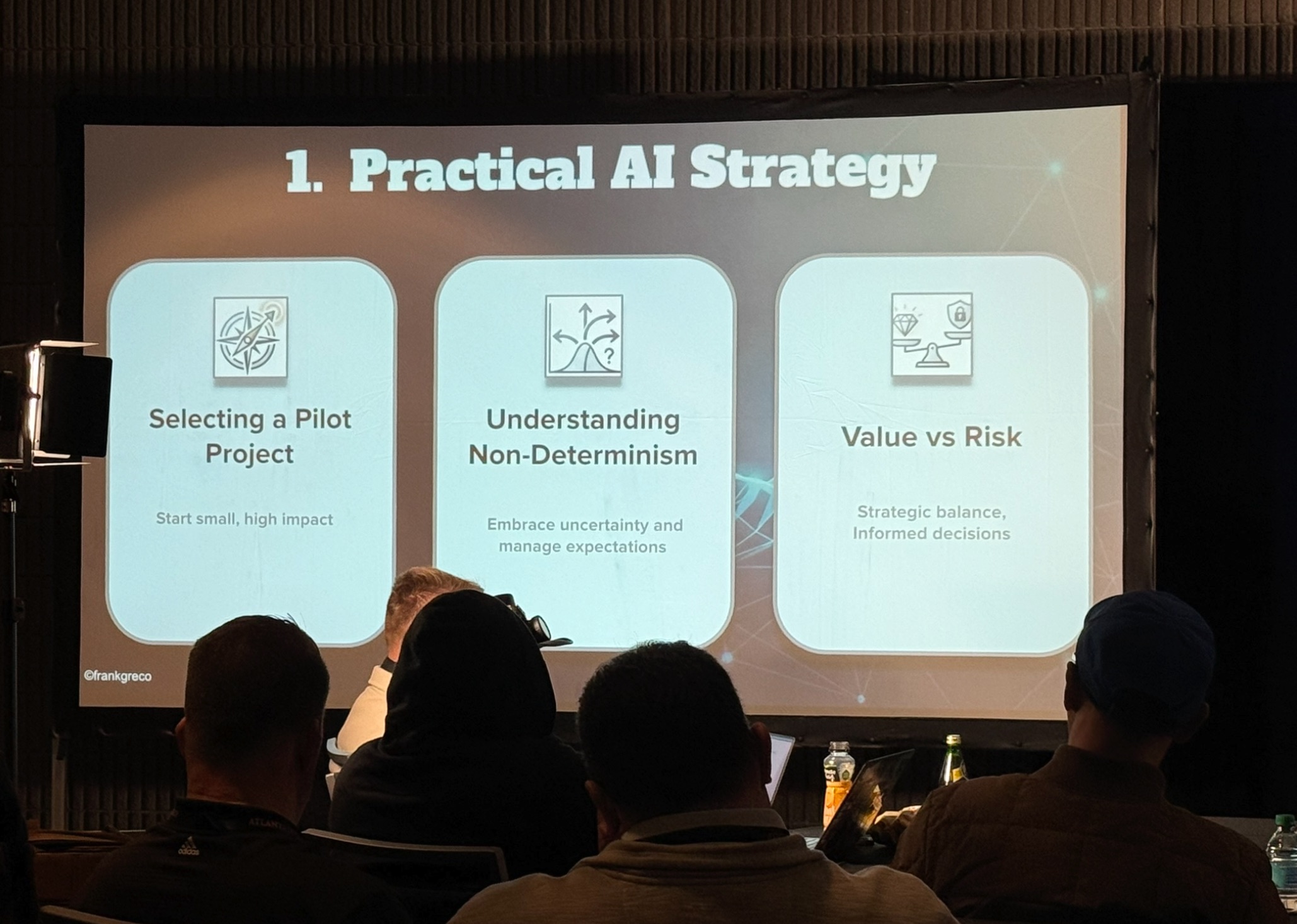

The first pillar is the business strategy. It’s about deciding what problem you’re actually trying to solve with AI, and why it matters to your organization. Without that anchor, AI initiatives tend to drift toward whatever is technically interesting rather than what’s genuinely valuable.

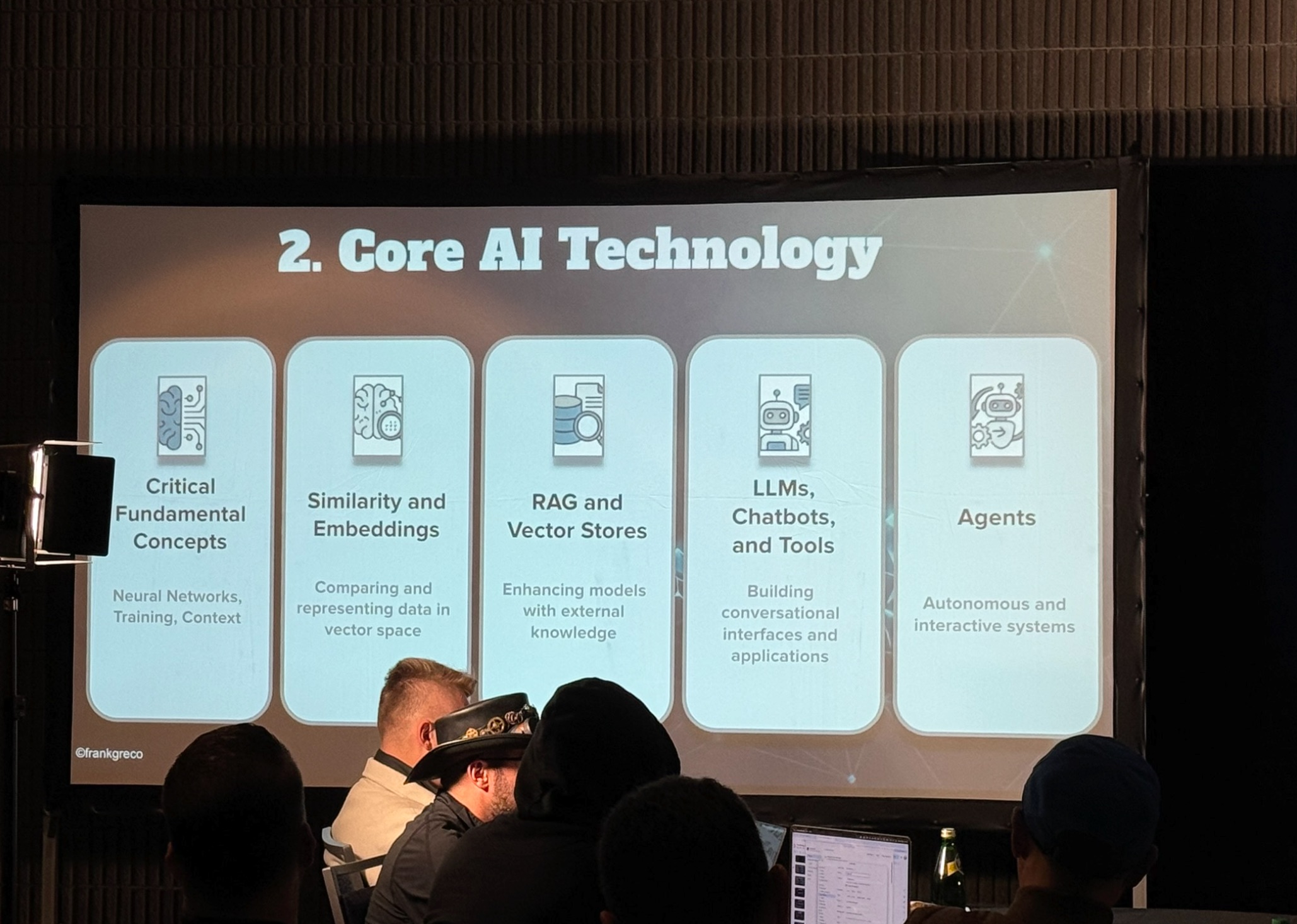

The second pillar, understanding the core technology, is where Frank pushed hardest. He argued that even developers often treat generative AI like just another framework to learn, which fundamentally misunderstands what makes it different.

LLMs are non-deterministic. Given the same input, they can produce different outputs, which is a conceptual break from over 60 years of computing where the same data reliably produced the same result. Leaders who don’t grasp this distinction will struggle to set appropriate expectations, evaluate outputs, or assess risk.

The third pillar, implementation, is where strategy meets reality. Frank recommended starting with a pilot project that is useful but not mission-critical. It should be something meaningful enough to teach you real lessons, but not so central to operations that failure results in dire consequences. It’s similar to how most organizations handled the move to cloud, where they didn’t migrate their core banking system first, but instead learned on something lower-stakes, built confidence and competency, and scaled from there.

The security and legal risk nobody is taking seriously enough

Frank was emphatic on one point that he felt wasn’t getting enough attention: LLMs are inherently insecure, and organizations need to treat them that way from day one. He demonstrated this himself, describing how he was able to manipulate a chatbot into behaving like a pizzeria employee simply by using prompt injection. The bigger concern today is AI coding assistants that incorporate third-party skills and prompts without vetting them, potentially executing malicious code inside a developer’s environment.

The legal dimension is equally underappreciated. Frank flagged recent changes to platform liability law that affect companies deploying chatbots. Where organizations once had certain protections if a third party misused a service, that shield has eroded. If someone misuses your company’s chatbot, the legal exposure may now land squarely on you. His advice was direct: before deploying any customer-facing AI, talk to your lawyers.

Data privacy is another key risk. Frank noted that roughly 90% of people using AI tools at work don’t realize they’re sending potentially sensitive data to an external service. An employee typing internal business details into a public chatbot is effectively sharing that information with a third party, regardless of what the vendor’s terms of service say about data use. Vendors get acquired, policies change, and by then the data is already out there.

Build an internal AI “center of gravity”

Frank’s final set of recommendations centered on organizational structure rather than technology. His experience educating middle management at Google taught him that top-down mandates to “use AI” rarely work. People need to see practical, relatable examples of AI making their actual jobs easier before they engage. The model that worked at Google was a recurring internal showcase: a weekly lunch where AI practitioners demonstrated small, concrete wins to colleagues across the organization. Over time, 500 people were showing up voluntarily.

The broader lesson is that companies should deliberately build a team of internal AI experts who can shepherd the technology across the organization and serve as resources, translators, and guardrails simultaneously. This goes beyond training developers. It’s about creating the infrastructure for responsible adoption at every level. That includes establishing model risk management practices, particularly in regulated industries like financial services and healthcare, where the consequences of a non-deterministic system making a wrong call can be severe.

Finally, measure the ROI. If you can’t demonstrate that your AI initiatives are delivering value, you can’t justify continued investment or make the case for scaling them. Leaders who want AI to take root in their organizations need to make the results visible (successes and failures) so the organization can learn and iterate rather than just chase the next tool.