Here’s the fourth in my series of notes taken from keynotes at CUSEC 2010, the 2010 edition of the Canadian University Software Engineering Conference. These are from Bits of Evidence: What we actually know about software development and why we believe it’s true, a keynote given by my friend Greg Wilson, the computer science prof we all wish we had. He’s also the guy who gave me my shot at my first article for a developer magazine, a review of a couple of Ajax books in Software Development.

My notes from his keynote appear below; he’s posted his slides online.

A Little Family History

- My great-grandfather on my father’s side came from Australia

- He was sent there, along with many other criminals from the UK, to Botany Bay

- Whenever we kids did anything bad, my mother would say to my father: “This is your side of the family”

- This happened until the day my sister triumphantly discovered that my maternal great-grandfather was a Methodist minister who ran off with his church’s money and a 15-year-old girl from his parish

- She never brought up my father’s family history again

- It took years and poring over 70,000 sheets of microfiche to track down my great-grandfather, all to find two lines, which said he was sentenced, but not why

- These days, students get upset if it takes more than 15 seconds to find answers to last year’s exam

- Some things haven’t changed: while technology has improved, the way we develop software for it hasn’t

Scurvy

- In the Seven Years’ War, which lasted longer than seven years (1754-63), Britain lost:

- 1,512 sailors to enemy action

- 100,000 sailors to scurvy

- Scurvy’s a really ugly disease. You get spots on your skin, your gums puff up and go black, you bleed from your mucous membranes, and then the really bad stuff happens

- In 1747, a Scotsman named James Lind conducted what might have been the first-ever controlled medical experiment

- Pickled food keeps fresh, he reasoned, how about pickled sailors?

- Lind tried giving different groups of sailors with scurvy various acidic solutions:

- Cider

- Sulfuric acid

- Vinegar

- Sea water (this was the control)

- Oranges

- Barley water

- The sailors who had the oranges were the ones who recovered

- Despite Lind’s discovery, nobody paid attention until a proper Englishman repeated the experiment in 1794

- This discovery probably won the Napoleonic Wars: the British navy was the deciding factor

- As a result of this discovery, British sailors planted lime trees at their ports of call and ate the fruit regularly; it’s how the term “limey” got applied to them, and later by association to British people in general

Lung Cancer

- It took a long time for medical science to figure out that controlled studies were good

- In the 1920s, there was an epidemic of lung cancer, and no one knew the cause

- There were a number of new things that had been introduced, so any of them could be blamed – was it cars? Cigarettes? Electricity?

- In the 1950s, the researchers Hill and Doll took British doctors and split them into 2 groups:

- Smokers

- Non-smokers

- The two discoveries to come from their research were:

- It is unequivocal that smoking causes lung cancer

- Many people would rather fail than change

- In response to the study, the head of the British Medical Association said: “What happens ‘on average’ is of no help when one is faced with a specific patient”

- The important lesson is to ask a question carefully and be willing to accept the answer, no matter how much you don’t like it

Evidence-Based Medicine

- In 1992, David Sackett of McMaster University coined the term "evidence-based medicine"

- As a result, randomized double-blind trials are accepted as the gold standard for medical research

- Cochrane.org now archives results from hundreds of medical studies conducted to that standard

- Doing this was possible before the internet, but the internet brings it to a wider audience

- You can go to Cochrane.org, look at the data and search for cause and effect

Evidence-Based Development?

- That’s well and good for medicine. How about programming?

- Consider this quote by Martin Fowler (from IEEE Software, July/August 2009):

“[Using domain-specific languages] leads to two primary benefits. The first and simplest, is improved programmer productivity… The second…is…communication with domain experts.”

- What just happened?

- One of the smartest guys in our industry

- Made 2 substantive claims

- In an academic journal

- Without a single citation

- (I’m not disagreeing with his claims – I just want to point out that even the best of us aren’t doing what we expect the makers of acne creams to do)

- Maybe we need to borrow from the Scottish legal system, where a jury can return one of three verdicts:

- Innocent

- Guilty

- Not proven

- Another Martin Fowler line:

“Debate still continues about how valuable DSLs are in practice. I believe debate is hampered because not enough people know how to use DSLs effectively.”

- I think debate is hampered by low standards of proof

Hope

- The good news is that things have started to improve

- There’s been a growing emphasis on empirical studies in software engineering research since the mid-1990s

- At ICSE 2009, papers describing new tools or practices routinely included results from some kind of test study

- Many of these studies are flawed or incomplete, but standards are constantly improving

- It’s almost impossible to write a paper on a new tool or technology without trying it out in the real world

- There’s the matter of the bias in the typical guinea pigs for these studies: undergrads who are hungry for free pizza

My Favourite Little Result

- Anchoring and Adjustment in Software Estimation, a 2005 paper by Aranda and Easterbrook

- They posed this question to programmers:

“How long do you think it will take to make a change to this program?”

- The control group was also told:

“I have no experience estimating. We’ll wait for your calculations for an estimate.”

- Experiment group A was told:

“I have no experience estimating, but I guess this will take 2 months to finish.”

- Experiment group B was told:

“I have no experience estimating, but I guess this will take 20 months to finish.”

- Here were the groups’ estimates:

- Group A, the lowball estimate: 5.1 months

- Control group: 7.8 months

- Group B, the highball estimate: 15.4 months

- The anchor – the “I guess it will take x months to finish” – mattered more than experience.

- It was a small hint, buried in the middle of the requirements, but it still had a big effect, regardless of estimation method or anything else

- Engineers give back what they think we want to hear

- Gantt charts, which are driven by these estimates, often end up being just wild-ass guesses in nice chart form

- Are agile projects similarly affected, just on a shorter and more rapid cycle?

- Do you become more percentage-accurate when estimating shorter things?

- There’s no data to back it up!

Frequently Misquoted

- You’ve probably heard this in one form or another:

“The best programmers are up to 28 times more productive than the worst.”

- It’s from Sackman, Erikson and Grant’s 1968 paper, Exploratory experimental studies comparing online and offline programming performance

- This quote often has the factor changed – I’ve seen 10, 40, 100, or whatever large number pops into the author’s head

- Problems with the study:

- The study was done in 1968 and was meant to compare batch vs. interactive programming

- Does batch programming resemble interactive programming?

- None of the programmers had any formal training in computer programming because none existed then

- (Although formal training isn’t always necessary – one of the best programmers I know was a rabbinical student, who said that all the arguing over the precise meaning of things is old hat to rabbis: “We’ve been doing this for much longer than you”.)

- What definition of “productivity” were they using? How was it measured?

- Comparing the best in any class to the worst in the same class exaggerates any effect

- Consider comparing the best driver to the worst driver: the worst driver is dead!

- Too small a sample size, too short an experimental period: 12 programmers for an afternoon

- The next similar “major” study was done with 54 programmers, for “up to an hour”
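
The point above about best-versus-worst comparisons can be illustrated with a small simulation. This is only a sketch under stated assumptions: the log-normal distribution of task times, the `sigma` value, and the function names are all made up for illustration and are not taken from the Sackman study. Every simulated programmer is drawn from the same distribution, yet the best-to-worst ratio still grows as the group gets larger.

```python
import random
import statistics


def best_to_worst_ratio(n_programmers, rng, sigma=0.5):
    """Ratio of slowest to fastest task time in one simulated group.

    Assumption: task times are log-normally distributed with the same
    parameters for everyone, so no programmer is inherently "better".
    """
    times = [rng.lognormvariate(0.0, sigma) for _ in range(n_programmers)]
    return max(times) / min(times)


def median_ratio(n_programmers, trials=2000, seed=1968):
    """Median best-to-worst ratio over many simulated groups of one size."""
    rng = random.Random(seed)  # fixed seed so the sketch is repeatable
    return statistics.median(
        best_to_worst_ratio(n_programmers, rng) for _ in range(trials)
    )


if __name__ == "__main__":
    # Group sizes 12 and 54 echo the two studies mentioned in the notes.
    for group_size in (2, 12, 54):
        print(group_size, round(median_ratio(group_size), 2))
```

The extreme ratio here depends on how many people were sampled, which is one reason a single "N times more productive" figure is hard to interpret.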

So What Do We Know?

- Look at Lutz Prechelt’s work on:

- Productivity variations between programmers

- Effects of language

- Effects of web programming frameworks

- Things his studies have confirmed:

- Productivity and reliability depend on the length of the program’s text, independent of language level

- Output in text per hour is constant [Joey’s note: Corbato’s Law!]

- The take-away: Use the highest-level language you can!

- Might not always be possible: "Platform-independent programs have platform-independent performance"

- Might require using more/faster/better hardware to compensate

- That’s engineering – it’s what happens when you take science and economics and put them together

- Discoveries from Boehm, McClean and Urfrig’s (1975) Some Experience with Automated Aids to the Design of Large-Scale Reliable Software:

- Most errors are introduced during requirements analysis and design

- The later a bug is removed, the more expensive it is to take it out

- This explains the two major schools of software development:

- Pessimistic, big-design-up-front school: “If we tackle the hump in the error injection curve, fewer bugs get into the fixing curve”

- Optimistic, agilista school: Lots of short iterations mean the total cost of fixing bugs goes down

- Who’s right? If we find out, we can build methodologies on facts rather than best-sellers

Why This Matters

- Too many people rely on the “unrefuted hypothesis based on personal observation”

- Consider this conversation:

- A: I’ve always believed that there are just fundamental differences between the sexes

- B: What data are you basing that opinion on?

- A: It’s more of an unrefuted hypothesis based on personal observation. I have read a few studies on the topic and I found them unconvincing…

- B: Which studies were those?

- A: [No reply]

- Luckily, there’s a grown-up version of this conversation, and it takes place in the book Why Aren’t More Women in Science? Top Researchers Debate the Evidence (edited by Ceci and Williams)

- It’s an informed debate on nature vs. nurture

- It’s a grown-up conversation between:

- People who’ve studied the subject

- Who are intimately familiar with the work of the other people in the field with whom they are debating

- Who are merciless in picking apart flaws in each other’s logic

- It looks at:

- Changes in gendered SAT-M scores over 20 years

- Workload distribution from the mid-20s to early 40s

- The Dweck effect

- Have 2 groups do a novel task

- Tell group A that success in performing the task is based on inherent aptitude

- Tell group B that success comes from practice

- Both groups will be primed by the suggestions and “fulfill the prophecy”

- We send strong signals to students that programming is a skill inherent to males, which is why programming conferences are sausage parties

- Facts, data and logic

Some Things We Know (and have proven)

Increase the problem complexity 25%, and you double the solution complexity. (Woodfield, 1979)

The two biggest causes of project failure (van Genuchten et al, 1991):

- Poor estimation

- Unstable requirements

If more than 20 – 25% of a component has to be revised, it’s better to rewrite it from scratch. (Thomas et al, 1997)

- Caveats for this one:

- It comes from a group at Boeing

- Applies to software for flight avionics, a class of development with stringent safety requirements

- Haven’t seen it replicated

Rigorous inspections can remove 60 – 90% of errors before the first test is run (Fagan, 1975)

- Study conducted at IBM

- Practical upshot: Hour for hour, the most effective way to get rid of bugs is to read the code!

Cohen 2006: All the value in a code review comes from the first reader in the first hour

- 2 or more people reviewing isn’t economically effective

- Also, after an hour, your brain is full

- Should progress be made in small steps? Look at successful open source projects

Shouldn’t our development practices be built around these facts?

Conway’s Law – often paraphrased as “A system reflects the organizational structure that built it” – was meant to be a joke

- Turns out to be true

- Jim Herbsleb et al proved it in 1999

Nagappan et al (2007) and Bird et al (2009) got their hands on data collected during the development of Windows Vista and learned:

- Physical distance doesn’t affect post-release fault rates

- Distance in organizational chart does

- If two programmers are far apart in the org chart, their managers probably have different goals [Joey’s note: especially in companies like Microsoft where performance evaluations are metrics-driven]

- Explains why big companies have big problems

- I remember once being told by someone from a big company that "we can’t just have people running around doing the right thing."

Two Steps Forward

- Progress sometimes means saying "oops"

- We once thought that code metrics could predict post-release fault rates, until El Emam et al (2001), The Confounding Effect of Class Size on the Validity of Object-Oriented Metrics, revealed that:

- Most metrics values increase with code size

- If you do a double-barrelled correlation and separate the effect of lines of code from the effect of the metric, lines of code accounts for all the signal

- It’s a powerful and useful result, even if it’s disappointing

- It raises the bar on what constitutes "analysis"

- We’re generating info and better ways to tackle problems
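
The idea of separating the effect of lines of code from the effect of a metric can be sketched with a first-order partial correlation. This is a simplified stand-in, not the analysis used in the El Emam paper, and the data is synthetic: the coefficients, noise levels, and names such as `simulate` are assumptions chosen for illustration. Both the metric and the fault count are generated from code size plus independent noise, so the metric carries no information of its own.

```python
import math
import random


def pearson(xs, ys):
    """Plain Pearson correlation coefficient of two equal-length lists."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


def partial_correlation(xs, ys, zs):
    """Correlation of xs and ys after removing the linear effect of zs."""
    r_xy = pearson(xs, ys)
    r_xz = pearson(xs, zs)
    r_yz = pearson(ys, zs)
    return (r_xy - r_xz * r_yz) / math.sqrt((1 - r_xz ** 2) * (1 - r_yz ** 2))


def simulate(n_modules=2000, seed=2001):
    """Synthetic modules where size alone drives both metric and faults."""
    rng = random.Random(seed)  # fixed seed so the sketch is repeatable
    loc = [rng.uniform(100, 5000) for _ in range(n_modules)]
    # Hypothetical metric and fault counts: each is size plus its own noise.
    metric = [0.01 * size + rng.gauss(0, 5) for size in loc]
    faults = [0.002 * size + rng.gauss(0, 2) for size in loc]
    raw = pearson(metric, faults)
    controlled = partial_correlation(metric, faults, loc)
    return raw, controlled


if __name__ == "__main__":
    raw, controlled = simulate()
    print("metric vs faults, raw:", round(raw, 3))
    print("metric vs faults, controlling for size:", round(controlled, 3))
```

In this synthetic setup the raw correlation looks strong, while the correlation that controls for size falls to roughly zero, which is the pattern the notes describe.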

Folk Medicine for Software

- Systematizing and synthesizing colloquial practice has been very productive in other disciplines – examples include:

- Using science to derive new medicines from folk medicine in the Amazon

- Practices in engineering that aren’t documented or taught in school

- There’s a whole lot of stuff that people in industry do as a matter of course that doesn’t happen in school

- If you ask people in a startup what made them a success, they’ll be wrong because they have only one point of data

- If you’re thinking about grad school, it’s this area where we’ll add value

How Do We Get There?

- One way is Beautiful Code, a book I co-edited

- All proceeds from sales of the book go to Amnesty International

- Each of its 34 chapters was contributed by a different programmer, who explains a bit of code that they found beautiful

- I asked Rob Pike, who contributed to the development of Unix and C, and he replied "I’ve never seen any beautiful code."

- I asked Brian Kernighan, another guy who contributed to the development of Unix and C, and he picked a regex matcher that Rob Pike wrote in C

- This has led to a series of "Beautiful" books:

- The next book is “the book without a name”

- I would’ve called it Beautiful Evidence, but Edward Tufte got there first

- The book will be about "What we know and why we think it’s true"

- Its main point is knowledge transfer

- I’m trying to change the debate

- I want a better textbook, and I believe that this will be a more useful one

- "I want your generation to be more cynical than it already is."

- I want you to apply the same standards that you would to acne medication

- How many of you trust research paid for by big pharma on their own products?

- I want you to have higher standards for proof

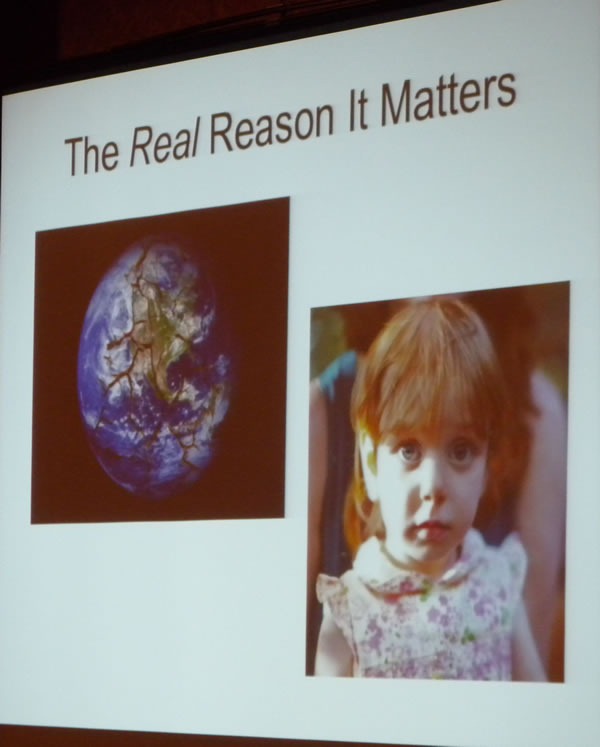

- The real reason all this matters? [Shows a slide with a picture of the world and his daughter]

- “She is empirically the cutest kid in the world”

- Public debate is mostly evidence-free

- You’re not supposed to convict or release without evidence

- I want my daughter to inherit a better world

What University is For

- [Asks the audience] Hands up if you think you know what university is for

- One student answers “A piece of paper!”

- Greg’s reply: "Remember when I said I wanted you to be more cynical? Don’t go that far."

- In my high school in interior BC, there was no senior math or physics course, because there was no interest

- I went to university, hungry to learn

- I later discovered that it wasn’t about the stuff you learned

- Was it supposed to teach us how to learn?

- It’s supposed to train us to ask good questions

- Undergraduates are the price universities pay to get good graduate students

- This thinking got me through the next 15 years

- Then I went back to teaching, at U of T, and I think I know what universities are for now:

- We are trying to teach you how to take over the world.

- You’re going to take over, whether you want to or not.

- You’re inheriting a world we screwed up

- You’ll be making tough decisions, without sufficient experience or information

- Thank you for listening. Good luck.

Comments from the Q&A Session

- Who’s going to an anti-proroguing rally this weekend?

- It won’t do you any good

- You want to make change? It’s not made at rallies, but by working from within

- The reason you can’t teach evolution in Texas isn’t because fundamentalists held rallies, but because they ran for the school board

- We have to do the same

- To make change, you have to get in power and play the game

- “Dreadlocks and a nose ring does not get you into the corridors of power”

- The ACM and IEEE are arguing your case

- Join them and influence them!

- Do we want to turn out engineers, who are legally liable for their work, rather than computer scientists, who are not?

- Some of us will have professional designations and be legally liable, some of us won’t — there’s no one future