The Sorting Hat

I recently interviewed some programmers for a couple of available positions at CTS, the startup crazy enough to take me as its CTO. Each interview started with me giving the candidate a quick overview of the company and the software that we’re hiring people to implement, after which it was the candidate’s turn to tell his or her story. With the introductions out of the way, usually at the ten- or fifteen-minute mark of the hour-long session, I ran each candidate through the now-infamous “FizzBuzz” programming test:

Write a program that prints out the numbers from 1 through 100, but…

- For numbers that are multiples of 3, print “Fizz” instead of the number.

- For numbers that are multiples of 5, print “Buzz” instead of the number.

- For numbers that are multiples of both 3 and 5, print “FizzBuzz” instead of the number.

That’s it!

This was the “sorting hat”: the interview took different tacks depending on whether the candidate could or couldn’t pass this test.

I asked each candidate to do the exercise in the programming language of their choice, with pen and paper rather than on a computer. Taking away the computer prevented them from simply Googling a solution. It also forced them to actually think about the solution rather than simply go through the “type in some code / run it and see what happens / type in some more code” cycle. In exchange for taking away the candidates’ ability to verify their code, I was a little more forgiving than a compiler or interpreter, letting missing semicolons or similarly small errors slide.

The candidates sat at my desk and wrote out their code while I kept an eye on the time, with the intention of invoking the Mercy Rule at the ten-minute mark. They were free to ask any questions during the session; the most common was “Is there some sort of trick in the wording of the assignment?” (there isn’t), and the most unusual was “Do you think I should use a loop?”, which nearly made me end the interview right then and there. Had any of them asked to see what some sample output would look like, I would have shown them a text file containing the first 35 lines of output from a working FizzBuzz program.

The interviews concluded last week, after which we tallied the results: 40% of the candidates passed the FizzBuzz test. Worse still, I had to invoke the Mercy Rule for one candidate who hit the ten-minute mark without a solution.

The Legend of FizzBuzz

I was surprised to find that only one of the candidates had heard of FizzBuzz. I was under the impression that it had worked its way into the developer world’s collective consciousness since Imran Ghory wrote about the test back in January 2007:

On occasion you meet a developer who seems like a solid programmer. They know their theory, they know their language. They can have a reasonable conversation about programming. But once it comes down to actually producing code they just don’t seem to be able to do it well.

You would probably think they’re a good developer if you’ld never seen them code. This is why you have to ask people to write code for you if you really want to see how good they are. It doesn’t matter if their CV looks great or they talk a great talk. If they can’t write code well you probably don’t want them on your team.

After a fair bit of trial and error I’ve come to discover that people who struggle to code don’t just struggle on big problems, or even smallish problems (i.e. write a implementation of a linked list). They struggle with tiny problems.

So I set out to develop questions that can identify this kind of developer and came up with a class of questions I call “FizzBuzz Questions” named after a game children often play (or are made to play) in schools in the UK.

Ghory’s post caught the attention of one of the bright lights of the Ruby and Hacker News community, Reg “Raganwald” Braithwaite. Reg wrote an article titled Don’t Overthink FizzBuzz, which in turn was read by the 800-pound gorilla of tech blogging, Jeff “Coding Horror” Atwood. It prompted Atwood to ask why many people who call themselves programmers can’t program. From there, FizzBuzz took on a life of its own. Just do a search on the term “FizzBuzz”; there are now lots of blog entries on the topic (including this one now).

In Dave Fayram’s recent article on FizzBuzz (where he took it to strange new heights, using monoids), he observed that FizzBuzz incorporates elements that programs typically perform:

- Iterating over a group of entities,

- accumulating data about that group,

- providing a sane alternative if no data is available, and

- producing output that is meaningful to the user.
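Those four elements map onto FizzBuzz quite directly. Here is a minimal plain-Python sketch of that mapping (not Fayram’s monoid-based version); the helper name `fizzbuzz_line` and the `rules` parameter are made up for illustration:

```python
def fizzbuzz_line(n, rules=((3, "Fizz"), (5, "Buzz"))):
    """Return the text FizzBuzz prints for n (name and `rules` are illustrative)."""
    # Accumulate: collect a word for every rule whose divisor divides n.
    words = "".join(word for divisor, word in rules if n % divisor == 0)
    # Sane alternative: nothing accumulated, so fall back to the number itself.
    return words or str(n)


if __name__ == "__main__":
    # Iterate over the group and produce output that is meaningful to the user.
    for n in range(1, 101):
        print(fizzbuzz_line(n))
```

Because the words are accumulated, multiples of both 3 and 5 come out as “FizzBuzz” without a separate check for 15.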

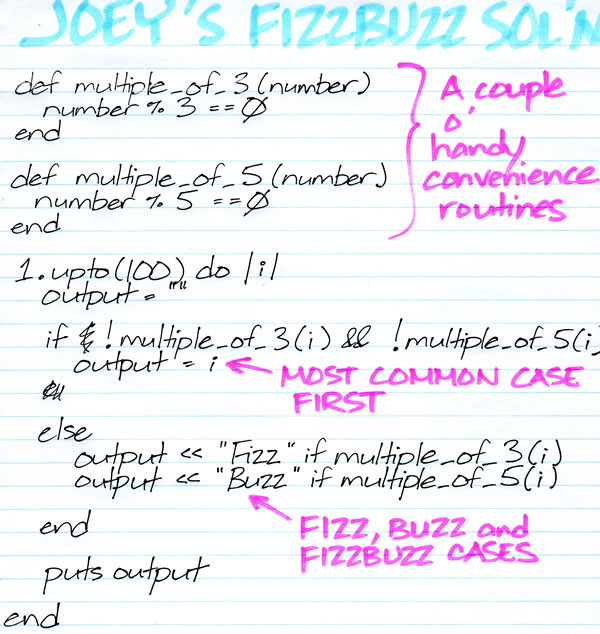

My FizzBuzz Solution

I can’t fairly judge other programmers with FizzBuzz without going through the test myself. I implemented it in Ruby, my go-to language for hammering out quick solutions, and in the spirit of fairness to those I interviewed, I did it with pen and paper on the first day of the interviews (and confirmed it by entering and running it):

I consider myself a half-decent programmer, and I’m pleased that I could bang out this solution on paper in five minutes. My favourite candidates from our interviews also wrote up their solutions in about the same time.

A Forty Percent Pass Rate?!

Okay, the picture above shows a 33% pass rate. Close enough.

How is it that only 40% of the candidates could write FizzBuzz? Consider that:

- All the candidates had some kind of college- or university-level computer programming education; many had computer science certificates or degrees.

- The candidates had varying degrees of work experience, ranging from years to decades.

- Each candidate could point to a sizeable, completed project on which they worked.

- All the candidates came to us pre-screened by a recruiting company.

A whopping sixty percent of the candidates made it past these “filters” and still failed FizzBuzz, and I’m not sure how. I have some guesses:

- Moving away from programming: Some of them may have started as developers, but over time, their work changed. They still hold some kind of programming-related title and their work still has something to do with the applications they were hired to develop, but these days, most of their job involves tasks outside the realm of actually writing code.

- “Copy and paste, adjust to taste”-style programming: Also known as “Coding by Googling”, this is when a developer writes applications by searching for code that’s similar to the problem that s/he’s trying to solve, copy-and-pastes it, and then tweaks it as needed. I get the feeling that a lot of client-side JavaScript is coded this way. This is one of the reasons I insisted that the FizzBuzz exercise be done on paper rather than with a computer.

- Overspecialization: It could be that some candidates have simply been writing the same kind of program over and over, and now do their work “on autopilot”. I’ve heard that FizzBuzz is pretty good at catching this sort of developer unaware.

If you’re interviewing prospective developers, you may want to hit them with the FizzBuzz test and make them do it on paper or on a whiteboard. The test may be “old news” — Imran wrote about it at the start of 2007; that’s twenty internet years ago! — but apparently, it still works for sorting out people who just can’t code.

Be sure to read the follow-up article, Further Into FizzBuzz!

99 replies on “FizzBuzz Still Works”

FizzBuzz tests the ability to read, solve and test a problem in a fairly elegant and apparently effective way.

Contrast this test to the test proposed in the first comment by “Toepopper” here (http://www.codinghorror.com/blog/2007/02/why-cant-programmers-program.html): swap two numbers without using a third variable. A lesser manager may think this is the same sort of thing, but really it’s just “do you know a particular programming trick”. Entirely different!

Random Thought #2:

When anyone says computers are going to start doing our programming for us, this is the first problem to feed them. Of course, the best computer-feedable description of the problem is basically the solution…

Random Thought #3:

Have you considered using Facebook for comments, to integrate everything (sorta) together?

Nice article. I tried the test myself on paper. Although I haven’t tried it on a computer yet, the right logic is definitely there.

I definitely agree with programming tests. What I like about your example of FizzBuzz is that it’s a logic test; it’s not specific to a particular product or module. If the person can think like a computer and imagine the code running line-by-line, he/she will pass.

Years ago, I had a challenging PHP test. It wasn’t hard; it just involved using modules I’ve never used before and I had to do some hardcore googling to get around that and I was able to complete it. Back in the day, I had an interview where someone asked me “Can you make a basic version of Facebook?” I don’t remember my answer, but they were making a lot of demands.

Programming is a battlefield with politics and back-stabbing. There are a lot of demands. In nearly every task where programming was required, I was forced to learn something new on the spot and rummage through someone else’s spaghetti code. It was a tough challenge, despite my prior experience.

Let’s just say that certification and experience are two different things.

When I first saw this on Coding Horror I wondered if the test was weeding out those who can’t code well under pressure as opposed to those who can’t code. Even then, pressure is a fact of life in development and failure to come up with a solution to this problem under pressure is a good indicator of what you are dealing with in general.

We use FizzBuzz as an icebreaker for technical interviews, and I found it astonishing how many people did it wrong (for a junior position a while back we had 100% failure.) I’ve found it’s about an even mix of failing to read the spec (which sometimes gets caught when we ask them to walk through a few iterations, and sometimes not,) and the rest making coding errors or not knowing about the % operator. Oddly, a lot of people (reflexively?) looped from 0 to 100. I’m looking forward to seeing the results from some senior-level interviews next week!

I’m curious what “failures” look like. Is it complete inability to get anything done at all? Or relatively small mistakes, like ending at 99 instead of 100?

Well, not being a programmer, I have never before heard of this test. But using some Python to make some scripts for work (I would qualify as a googling one), my interest was sparked and I just (without googling) threw this out in about 7 minutes:

    i = 0
    while i < 101:
        if i % 3 == 0 and i % 5 == 0:
            print "Fizzbuzz"
        if i % 3 == 0:
            print "Fizz"
        elif i % 5 == 0:
            print "Buzz"
        else:
            print i
        i +=1

OK, no function or anything more fancy but a working loop… ;-)

Thanks for showing me this test – it gave me a small problem to solve and tickled my curiosity.

There’s some question that will catch out any programmer. An interview asking for some domain knowledge that I don’t have would scare me.

Is the modulus operator domain knowledge? I smirk at the concept. But if an interview asked me to do some bit operations with ~|& etc. it would take me longer than five minutes, and if I had to use ^ (XOR) I’d probably be screwed.

In reality modulus is pretty uncommon. Still I guess there are other ways to calculate it. Divide by three, and check to see if the answer is an integer?

Mostly fizzbuzz tests for the ability to follow a spec and write non-typical code, so it’s great. I’d push candidates to use an undefined function (eg. `multiple_of_three(x)`) should they not know modulus, as knowing modulus is not the point.
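The divide-and-check idea can be written without `%` at all. What follows is only an illustrative Python sketch; `is_multiple` and `fizzbuzz_line` are hypothetical helper names, not something from the comment above:

```python
def is_multiple(n, k):
    """Return True if n is a whole multiple of k, without using %."""
    # Integer-divide, multiply back, and see whether anything was lost.
    return (n // k) * k == n


def fizzbuzz_line(n):
    """Return the FizzBuzz text for n using only is_multiple()."""
    if is_multiple(n, 3) and is_multiple(n, 5):
        return "FizzBuzz"
    if is_multiple(n, 3):
        return "Fizz"
    if is_multiple(n, 5):
        return "Buzz"
    return str(n)


if __name__ == "__main__":
    for n in range(1, 101):
        print(fizzbuzz_line(n))
```

Sticking to integer division sidesteps any worry about floating-point rounding when checking whether a quotient “is an integer”.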

Here’s a question… Since the program requires no input, what would you have done if someone gave you:

    Console.WriteLine(1)
    Console.WriteLine(2)
    Console.WriteLine("Fizz")
    Console.WriteLine(4)
    Console.WriteLine("Buzz")
    …
    Console.WriteLine(14)
    Console.WriteLine("FizzBuzz")
    Console.WriteLine(16)
    …
    Console.WriteLine(98)
    Console.WriteLine("Fizz")
    Console.WriteLine("Buzz")

It isn’t incorrect according to the requirements, it is more CPU efficient (no calculations or if comparisons), but not flexible or algorithm efficient.

Just curious what would be your response….

-Tim

The only tricky part for me is the “multiples of”. Conceptually, I pretty much instantly knew what logic was required to get the result (and my result was very similar to the one given above, perhaps without the separate subroutines). But – the syntax for the multiples of piece would have almost certainly tripped me up. I knew there was syntax for it… I remembered there was a percentage sign involved and that you’d be testing the remainder… I could even have told you that the keyword “MOD” is used in VB… but I couldn’t have told you which number goes first. Would I have passed the test or not? In a real-life programming scenario, I would have googled “multiples %” and had the right formula in 3 seconds. I had the logic down, but my memory for naming conventions and syntax is pretty much crap unless I’m constantly using them.

This is the kind of thing that always gets me when I’m confronted with any programming test, and it’s always frustrating. Also, I absolutely despise written programming tests because when I write code for real I’m always re-arranging and deleting to make things better organized or more efficient (e.g. I might have written out the whole above solution without function calls, then halfway through realized that I’d rather use function calls and rewrite it with those calls), and having to do that on paper really throws me off and makes me nervous.

Here’s mine:

1.upto(100) { |i| o=""; o+="Fizz" if i%3==0; o+="Buzz" if i%5==0; o=i if o.empty?; puts o }

Another guess as to why some programmers can’t answer FizzBuzz is that, as you said, most people find the problem “too easy to be real!”, so they start thinking “Where is the catch?!”.

One thing that can help interviewers is to start the problem with something like: “I have an easy test for you, just to make sure that you can code *smile*, write some code that … “. I believe that in an interview, most people are using just half their potential, because of all the stress, the questioning …, so it’s the role of the interviewer to help them feel comfortable if he really wants to be fair. Sadly, most interviewers don’t do it (or even can’t do it).

Now for the sake of this argument, imagine that you have an interview with Google, and they ask you to answer the FizzBuzz problem, and let’s say that you don’t already know the problem, and let’s say that they give you one hour to solve it :), what do you think your reaction will be? :)

So the point that I want to make is that: “You will get results depending on how you’re asking the question.”

Here’s the test I typically use, giving candidates 90 minutes. No-one (except myself and another developer) has ever completed it successfully, but it matters more how far they get, how they explain what they’ve done and how they would finish it. Note that it’s for a Microsoft-stack job, but the list of allowed languages could be expanded:

Build a calculator using ASP.NET, Winforms, JavaScript, WPF or Silverlight.

It should support buttons for 0-9, +, -, *, /, =, C & CE.

It should operate like a normal calculator in terms of digit entry and result display.

It should comply with operator precedence.

Optional requirement – support multi-level parenthesis.

:-)

Timothy, if a candidate offered that answer to me, I would have told him his code should have been less than 30 lines. Plus, a solution like that might take more than 10 minutes to write out.

Thank you for the essay on your experience using FizzBuzz. The title of the article suggests that FizzBuzz sorts good programmers from poor programmers. As I read the essay, I was hoping to see evidence showing that people who can write the FizzBuzz program are productive programmers and that people who do poorly at the FizzBuzz challenge are not productive at programming. Instead, the evidence presented shows that 40% of candidates can whip out FizzBuzz in under ten minutes and 60% cannot. If you just need a simple, arbitrary way to sort candidates, you could more easily have asked your favorite pseudo random number generator to generate an integer between 1 and 5 for each candidate and then only interview the candidate if the random integer was 1 or 2. You would get a 40% passing rate on the RandomNumber challenge, and based on the evidence you presented, the RandomNumber test would be just as useful for sorting candidates as the FizzBuzz challenge. It does seem that FizzBuzz checks to see if people can reason through a problem and break it into components. These skills are essential for effective programming, but you have not verified the connection between good programmers and FizzBuzz performance. For one thing, interview conditions tend to make otherwise productive people frazzled and sloppy.

Cool test, I hadn’t heard about it before. It’s even better knowing how it is being used—so that these don’t appear as arbitrary from across the table ;-)

Anyways, here’s my humble contribution hammered out in a couple of minutes:

https://gist.github.com/4079605

I admit, I didn’t type it on paper. *But* this was my first attempt, no retyping or previous runs of the code. So it’s almost as if I copied what I would have written from paper without modification into the Python interpreter :-)

If anyone sees an error in my output, let me know.

@Vasi:

Depends, I’ve seen both, but just as an example:

@Sven:

You’re supposed to start at 1, read the spec.

You’re supposed to print “FizzBuzz”, not “Fizzbuzz”, read the spec.

You should have used “elif i % 3 == 0”, your code prints “Fizzbuzz\nFizz\n” for multiples of 3 and 5.
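Applying those three fixes to Sven’s loop would give something like the sketch below. It is written for Python 3 and wrapped in a generator so the lines can be inspected; both of those choices, and the name `fizzbuzz`, are assumptions for illustration rather than part of the original comment:

```python
def fizzbuzz(limit=100):
    """Yield the FizzBuzz lines for 1..limit (name and `limit` are illustrative)."""
    i = 1                       # fix 1: start at 1, not 0
    while i <= limit:
        if i % 3 == 0 and i % 5 == 0:
            yield "FizzBuzz"    # fix 2: capitalisation matches the spec
        elif i % 3 == 0:        # fix 3: elif, so multiples of 15 print only once
            yield "Fizz"
        elif i % 5 == 0:
            yield "Buzz"
        else:
            yield str(i)
        i += 1


if __name__ == "__main__":
    for line in fizzbuzz():
        print(line)
```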

@Timothy — I really like the solution of printing out each number one by one :-)

@Amy — I think in coding interviews it would be acceptable to have ‘pseudo-code’ and you can describe what you want the operator to do, but not have the proper syntax. At least that’s been my experience in the past. So you could use the ‘MOD’ or ‘%’ thing incorrectly semantically/syntactically on paper, but as long as you could describe to me what it is supposed to do and you’re correct, then I’d accept it.

of course, you still think it’s worthwhile to reject people without university education despite these results. you have a simple test. it barely costs you anything. why won’t you give them a try?

Happy to say had to do fizz buzz for my current job, nailed it.

I really like fizz buzz. I learned it at a previous employer. We used it for years with great success. I am amazed how many “programmers” cannot pass this basic test.

I like to take advantage of the simplicity of Fizz Buzz and find out more about how a programmer thinks. I ask them to test and prove their code. Usually they respond with a manual test method. Then I ask them how they would test their code if they were doing 1 through 1 Million? At that point they realize that they don’t have a good separation of concerns including their test logic in the loop. Or, they don’t realize what is wrong, and you know the caliber of developer they are.
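One way to picture that separation of concerns: keep the FizzBuzz rule in a pure function and check its properties in a separate routine, so the range can grow to a million without anyone reading output by eye. This is a hedged Python sketch; `fizzbuzz_line` and `check` are hypothetical names, and the properties tested are just one reasonable reading of the spec:

```python
def fizzbuzz_line(n):
    """Pure rule: return the text for n, with no printing involved."""
    if n % 15 == 0:
        return "FizzBuzz"
    if n % 3 == 0:
        return "Fizz"
    if n % 5 == 0:
        return "Buzz"
    return str(n)


def check(limit):
    """Verify the rule for every n in 1..limit, independently of any output loop."""
    for n in range(1, limit + 1):
        line = fizzbuzz_line(n)
        # "Fizz" appears exactly when n is a multiple of 3, "Buzz" when of 5.
        assert ("Fizz" in line) == (n % 3 == 0)
        assert ("Buzz" in line) == (n % 5 == 0)
        # Everything else must be the number itself.
        if n % 3 != 0 and n % 5 != 0:
            assert line == str(n)
    return True


if __name__ == "__main__":
    check(1_000_000)
    for n in range(1, 101):
        print(fizzbuzz_line(n))
```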

So, doing the test with Fizz Buzz you get twice as much for your money.

@Seth: no, the FizzBuzz test is not intended to sort good programmers from poor programmers. It sorts programmers from non-programmers.

Out of curiosity, how many is ‘some programmers’? I’m just wondering what the sample size is here.

40% seems low – when I did tech interviews a few years ago, the FizzBuzz question success rate was more like 80% (though I didn’t have the mercy rule, which saved a few of them); then I’d ask them for a recursive factorial method and the success rate would plummet.

So maybe programmers are, on the whole, getting worse. Or maybe our phone screen was tougher. Or it might just have been luck. Anything less than 100% success among people *applying for a programming job* makes me sad, though.

Seems that if someone isn’t familiar with the % operator (or mod in VB) they should still be able to crank out something like the following to replace it pretty quickly:

…

If (x / 3.0) - CInt(x / 3) = 0 Then

….

I’m surprised at the high failure rate, although I admit when I first heard about FizzBuzz I thought there was some kind of trick. I think if the question was presented like @Mouad mentioned, with the hint that it is not a “trap”, that would make it slightly easier.

Just curious, what is considered a failure? Do you grade it on the output or the style of the coding? Were you looking to see if they added comments to the code? I’m a self-taught developer and I really didn’t have a problem writing this.

@Wolfgang – That approach makes sense to me, but it hasn’t always been what I have encountered when taking a test like this.

It’s like when I was back in school. In CompSci 1, we had to take written tests about syntax, and I’d struggle to get a B, but my friend always got an A. In CompSci 2, it was all about creating actual, working programs and I aced those easily but the same friend who got an A in CompSci 1 struggled and was constantly asking me for help. It’s the logic and the method that’s important, not the syntax, and tests that focus on syntax more than logic are going to get you programmers who are great at memorization, but they won’t actually sort those who can program from those who can’t.

for(i=1;i<101;i++)alert((i%3?'':'Fizz')+(i%5?i%3?i:'':'Buzz'))

.: We didn’t filter out anyone without a university or college education. It’s just that all the candidates happened to have that level of education, and all studied programming.

By the way, keep those comments coming! I’ll answer any questions posed in the next article.

Your solution does not impress me. Divide is more expensive than adds, used to be.

What gets me is how many do not know a C/C++ switch() statement is not the same as if-then-else’s. Switch() statements are done with a jump table.

@EricHill Less than 30 lines of code wasn’t a requirement of the spec. That’s one problem with a test question like this. As a programmer, I could use the very question and their response to my answer to decide if I wanted to work for the company in the first place. If they have requirements like “less than 30 lines of code” but can’t be bothered to let the programmers know, it’s probably not a well managed company in the first place. On the flip side to that, of course programmers should have the ability to decipher a set of requirements and make logical inferences, such as keep the code robust.

On another note. I could answer: In my favorite programming language, called TimCode which I made myself, there is a function defined in the language for FizzBuzz, just in case it was ever needed. Thus:

FizzBuzz(1, 100);

Timothy: To your earlier comment, the one with all Console.WriteLine() statements — well, I’d give the candidate who submitted that solution some points for chutzpah. I’d also give him or her additional points for the CPU efficiency argument; we are a mobile dev shop after all, and battery efficiency is something we have to think about.

That being said, I’d have asked the candidate “Could you implement it another way?”

@Joey Yeah, I would do the same if I were the interviewer. I like the idea of “Rube Goldberg”-ing it, but I wouldn’t have the “chutzpah” to actually give that answer in my interview. At least, not if I cared about the job.

I had somewhat the same solution, except I skipped the if statement looking for % 3 && % 5. However, I wasn’t sure what the format looked like, e.g. whether there were spaces or whether you needed to output the number.

var str; for(var i = 1; i<101; i++) { str+= i.toString(); str += i%3==0?"Fizz":""; str += i%5==0?"Buzz":""; if(str.substring(str.length-1, str.length) != " ") { str += " "; } }

As a culture test you could give extra points for even knowing what the FizzBuzz test was so question one could be what is the FizzBuzz test for? This culture question then eliminates those who might otherwise claim to not know FB but then ace it.

My personal favorite is the Fibonacci test as it has a few layers. First is that the person has enough math to know what Fibonacci even is (Dock a few points and then explain). Next the Fibonacci is a tiny bit harder to think through than the FizzBuzz and lastly Fibonacci has the potential for overrun so you can see what they plan on doing with that. But with the high elimination of the FizzBuzz it looks like a great place to start. If you choke on FizzBuzz then you will pop a gasket on Fibonacci.

I find the problem embarrassingly trivial. If you’re interviewing “programmers” who have trouble with this, you are not doing good initial screening. This test is wasting everyone’s time.

I prefer to have an applicant bring in a piece of running code they wrote (and run it) and then explain the spec they were given, their solution process, and the result.

Giving programming tests in interviews is bullying in the most pathetic passive-aggressive way possible. The programming field is full of dbags and this type of interview test is an example of what happens when said dbags are given the chore of interviewing.

A couple months ago I wrote it in Erlang just because.

I’m a big fan of programming tests for programmers (duh, that’s the job, right?), but I think the reality of “programmers who can’t program” is highly overstated. It’s a complex problem and no one has really truly figured out the right way to do interviews.

You are trying to reduce false-positives, of course, so tests of some sort are good ideas, but is this really optimal in today’s tech employment market? There are too few developers for too many open jobs. That doesn’t mean you want just a body in a seat, but it also doesn’t mean you should keep interviewing the same way that filters out a lot of people. Technical people hate interviewing, and we often don’t give it the attention it deserves, instead taking the easy way out rather than investing additional time into improving the process and looking deeper at the available candidates.

I agree that FizzBuzz should be something that anyone who has been coding more than a week should be able to solve on the spot. Hell, if they can explain how to do it using Excel that’s a great step. But if someone can’t and has been developing for a while, what does that really say? That they have been faking it? I somehow doubt that, although that does happen. Chances are higher that they’re just having a bad brain day (surely every single developer here has had those frequently?). If they have other qualifications that don’t seem to mesh with the result of that test, it is likely worth your time to look a little deeper.

Do they have any open source projects available that you can look at? Ask them to explain exactly what they did on their last project; were they told to implement X, or did they have to figure out what X was? How much freedom did they have? Can you tailor a programming problem that’s specific to their previous experience and domain (e.g. I get asked a lot of bit manipulation questions from old-timers because they know I did a lot of that, too)?

Ask them if they would be more comfortable with a more complex problem but that is a take-home test that they have a few days to solve. Customize it for them so they can’t just get the answer off the net. It doesn’t take much time for you to do this and it can tell you a lot.

If the # of folks applying to your position is so high you can ignore this, then by all means do so, but at least in the several cities I deal with, we have a shortage of applicants to even interview in today’s market. When we do find anyone that looks good, we invest the time to interview them thoroughly rather than do a quick screen. You can usually spot the fakers without any coding test whatsoever, so ask yourself what your coding test is really telling you.

A couple of anecdotes from my experience in the past 25 years… the worst programmers I’ve ever worked with absolutely smoked every programming test I gave them. And, my favorite quick screen programming test is to give them a string and have them count how many times each letter appears in the string. Remarkably, I get less than a 20% success rate on that, and I’ve discovered all that is really telling me is if they’ve been exposed to C-style string handling or not: a remarkable number of talented developers simply haven’t had a need to do that work frequently so they get flustered by it in an interview.

So my bottom-line suggestions: a) realize that everyone has bad interview days and it’s worth being more careful in today’s job market; b) tailor programmer tests to their background if you really want to know about them; c) don’t hire assholes just because they smoke programming tests; d) do not, under any circumstances, ask someone to reverse a linked-list.

I had not been so certain about FizzBuzz tests in the past (that they’d be too easy, etc.). Now I know that it would be too easy for me simply because I am already experienced in the field.

It is an exceptionally good test, because it can be administered in 10 minutes and if the person cannot write pseudo code to answer that question, they are not likely to be of any value as a programmer.

I would also be able to, as an interviewer, use FizzBuzz as a tool to understand where applicants stack up compared to each other. I would not establish a maximum by lines of code (as suggested above). However, if there were an applicant who noticed that in order to fulfill the spec, it is not necessary to test for i % 3 == 0 and i % 5 == 0 twice for each iteration, you know that you have a standout in your mix.

If a more senior applicant’s code does things that reach beyond the spec in a good way (e.g. removing dependency upon magic numbers, allowing inputs to be configurable, breaking out subroutines), then that would help as well.

I feel like a lot can be learned by reading the code of a possible applicant, even for a very simple test like this one. It gives you a lot of insight into how their brain works, and into whether, given a simple problem, they write code that’s uglier than it needs to be, cleaner than others’, more modular than others’, or something adequately average.

I’d have done it like this:

var w='';

for (var i=1;i<101;i++){(i%15==0)?w='FizzBuzz':(i%5==0)?w='Buzz':(i%3==0)?w='Fizz':w='';console.log(i+' '+w);}

    Console.WriteLine(1)
    Console.WriteLine(2)
    Console.WriteLine("Fizz")
    …

this isn’t efficient

Console.Write("1\n2\nFiz…"); is much faster

each write/writeline has a very high cost, doing it in one statement is much more efficient

Great write up on Fizzbuzz.

In the last 4 years my company has hired over 30 developers and each of them went through that test. I stopped sitting in all the interviews after a while, but for as long as I did, I kept the solutions in one of my desk drawers. It amazed me to see how many different solutions there are to this simple problem. One of my “when-I-have-time” projects is to publish on a website all the working solutions “as-seen-in-the-interview”.

I had several cases of developers sent by reputable IT services companies who completely froze in front of this amazingly simple test. The hardest thing then is to decide what you do: do you just end the interview after 20 or 30 minutes? Or keep going, knowing you won’t hire them? Some of my coworkers just stopped the interview when the Fizzbuzz was a big fail (or when someone started talking about HTML6…) but for some reason I could not. Never changed my mind later though.

thomas

It is surprising to see how well you can filter out the bad candidates with a simple test like this. At our company, we require candidates to write a program in any language that calculates the average of an array of integers. I always find it embarrassing to bring the test up in the interview, but I’ll stick to it!

I would have stared at the page for a while, smiled and proudly handed you my implementation of FizzBuzz in Whitespace.

@iopq Nice. You win. :)

I scanned through the comments, and didn’t see this mentioned, pardon me if I missed it.

It seems to me inefficient to check !mod3 and !mod5, then turn right around and check them both again. You seem to think this is a good thing, because you highlighted “most common case first”, but that “most common” case is already doing both of the comparisons you’ll have to do again if you fall through.

I haven’t read the spec closely (saving myself for a live interview, naturally), but it seems to me it would be better to check whatever makes you print “Fizz”, then whatever makes you print “Buzz”, then fall through to the “most common case”.

Am I wrong?

You don’t need modulus. This took me about 10 minutes, but I did choose a shit language:

    #include <stdio.h>

    int main(int argc, char **argv)
    {
        int i;
        int i5 = 0;   /* counts up to 5, then resets */
        int i3 = 0;   /* counts up to 3, then resets */
        int fizz;
        int buzz;

        for (i = 1; i <= 100; i++) {
            i3++; i5++;
            fizz = 0; buzz = 0;
            if (i3 == 3) {
                fizz = 1;
                i3 = 0;
            }
            if (i5 == 5) {
                buzz = 1;
                i5 = 0;
            }
            if (fizz && buzz) {
                printf("FizzBuzz\n");
            }
            else if (fizz) {
                printf("Fizz\n");
            }
            else if (buzz) {
                printf("Buzz\n");
            }
            else {
                printf("%d\n", i);
            }
        }
        return 0;
    }

For what it’s worth, I know non-programmers who can solve FizzBuzz. The objections that it rejects perfectly qualified candidates amuse me. Qualified for what?

This isn’t the type of problem that takes a PhD in CS to solve. This is the type of problem that takes a high school level programming class to solve (if even that). If you feel like you’re a competent programmer but you’re failing interviews over questions like this, I’d suggest going through a bunch of programming exercises like this to wake up the relevant parts of your brain: they may have atrophied from lack of use doing too much repetitive grunt work. There are multiple books around with interview-style exercises harder than this one, or you can find some free CS classes at your level to enroll in.

Don’t do it to memorize the answers to a bunch of questions—interviewers ask all kinds of different questions anyway. Do it to get better at doing programming exercises in general. Do it so you can stop sweating over technical interviews. Do it so problems you haven’t seen before don’t scare you because you know you can handle them.

Seriously, just practice. If you’re at all competent, there’s no reason problems like FizzBuzz should be weeding you out.

I tried Joey’s algorithm; it was too complicated. I found this so much more trivial:

map(lambda x:(x%3 and x%5) and x or (x%5 and not x %3) and "fiz" or (x%3 and not x%5) and "buz" or "fizbuz", range(1,31) )