Hello from the Arc of AI conference, happening as I write this from Austin, Texas! I’m currently enjoying a pre-new-job “vacation” in true geek style by attending, speaking at, and playing accordion at an AI conference. Yeah, that tracks.

Yesterday (Tuesday, April 14, 2026), Arc of AI’s ringleader, Venkat Subramaniam, gave one of his “big picture” talks with the insight, warmth, and humor that are his stock in trade. I took notes and pictures, my phone took a recording, I used an LLM to pull it all together, and the end result is these notes from Venkat’s post-lunch keynote, titled Influencing the Irrational AI-Clouded Minds.

Venkat’s session went beyond concerns about code and into the idea of protecting our field from the “breakneck speed” that threatens to break our collective necks. Right now is both the best of times and the worst of times. As tech professionals, we find ourselves on a daily rollercoaster of twists and turns, bouncing between fascination with new capabilities and a very real fear of potential (career? existential?) threats.

Venkat noted that while AI is an incredible tool, it’s currently functioning as the most powerful “vomit engine” ever created. It can puke out more code than you can handle, but that doesn’t mean it’s code you should trust.

It takes time for tech to catch on and fit into our lives

As the saying goes, history doesn’t always repeat itself, but it often rhymes. Venkat reminded us that technology maturity is rarely instantaneous. We often take for granted that the world wasn’t always “plugged in.”

- Electricity: First made available in 1878, it was initially very expensive and difficult to generate. It took until the 1940s for the majority of U.S. homes to get electricity, which was a 60-year journey.

- Bicycles: Designs emerged in the 1830s (and wow, were they ridiculous and downright dangerous), but they didn’t become commonplace until the 1890s. 60 years again.

- Cars: They emerged in the late 1800s, and it took until the 1930s for 60% of U.S. households to own one. 60-ish years.

- Flight: I was in the “keener row” (Canadian slang for the front row of a classroom) at the keynote, and Venkat knows where I live, so he called on me and asked if I knew where and when the first commercial flight took place. I didn’t know. It turns out that it happened in 1914, cost $400 ($13,000 in today’s money), and was a 22-minute jaunt from Tampa to St. Pete. It would take until 1972 for 50% of Americans to have flown.

There is something both magical and sobering about that 60-year window. Every technology starts out shaky, costly, and unsafe. AI is no different.

Redefining AI: It’s Not Intelligence

One of the most grounding points of the keynote was the definition of AI itself. This is something that Venkat brought up at his talk in Tampa back in December.

We admire “intelligence” as original thinking and innovation. What AI does often looks more like what we call “plagiarism” in school:

“I call AI as Accelerated Inference, because that’s what AI really does. AI is an inference engine, not a machine [of intelligence].”

AI analyzes patterns based on available data. If the data’s garbage, the inference is garbage. And let’s be honest: we’ve trained AI on the code we’ve been writing for decades (and hey, it’s not all good). We’re effectively feeding it garbage and being surprised when it shows us what it’s got.

Where AI shines, and where it ends up where the sun don’t shine

Venkat shared a powerful anecdote about an expert C++ developer struggling to write automated tests for an enormously complex library. He suggested handing the task over to AI. The developer, after some coaxing, did that, and the AI generated a suite of tests with extensive mocking.

But the initial result didn’t even compile. Venkat began to think that his suggestion was a mistake…until the developer took a closer look at the test code.

The developer noted that while the tests didn’t work, they were close to working. He said that he could get them up and running in two hours. In the end, it took even less time than that estimate.

The developer came to the realization that what the AI did in seconds would have taken him three months of full-time effort to figure out. AI got the developer 70% of the way there, but it required a developer with enough expertise to do the remaining 30%.

AI strengths vs. weaknesses

I need to take a moment to thank Gemini for turning my hastily-typed notes into the table below, which summarizes AI’s strengths and weaknesses, as enumerated by Venkat at his talk:

| AI is Great At… | AI is Terrible At… |
| --- | --- |
| Handling Cognitive Load: It doesn’t have a “mind” to get overwhelmed by complex, intertwined code. | Reliability: It cannot yet create reliable code or documentation you can release without a human check. |
| Detecting Issues: It can snap-analyze a design and find bugs or architectural flaws like a missing game loop. | Contextual Truth: It can be “unbelievably smart” while being factually wrong, as seen in the Lua |
| Generating Ideas: It is a powerful tool for ideation where correctness isn’t the primary burden. | Correctness: It might give itself a 9/10 for correctness while admitting its quality is poor due to “mutating sin”. |

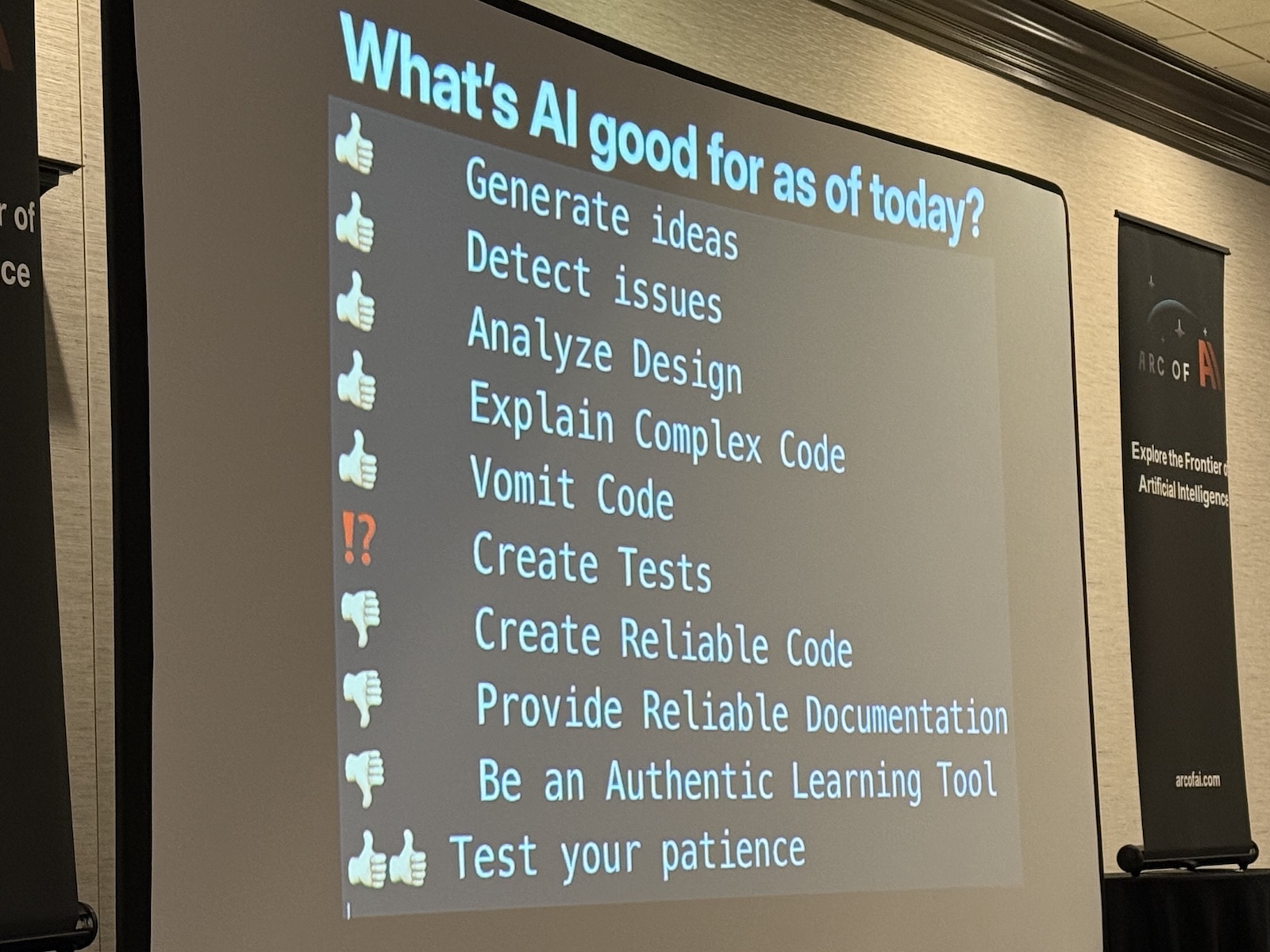

He also presented this slide:

- Generate ideas (thumbs up)

- Detect issues (thumbs up)

- Analyze design (thumbs up)

- Explain complex code (thumbs up)

- Vomit code (thumbs up)

- Create tests (maybe)

- Create reliable code (thumbs down)

- Provide reliable documentation (thumbs down)

- Be an authentic learning tool (thumbs down)

- Test your patience (double thumbs up)

The “Vasa” lesson: You can disagree with physics, but it bites back

To illustrate the danger of “irrational AI-clouded minds” in management trying to mis-apply AI, Venkat pointed to the Vasa, a Swedish warship built in the 1600s. The King of Sweden, powerful and arrogant, demanded a massive, overly-ornate ship. The king understood the power of bling, but not trivialities such as “seaworthiness” and “center of gravity”.

The shipwright lacked the courage (but had an excellent sense of self-preservation) to tell the King he was wrong. The outcome was predictable: the ship sailed only 1,600 yards before sinking. It stayed on the bottom for more than 300 years until it was raised and restored, and it now resides in a museum in Stockholm as an (admittedly beautiful) object lesson.

In 2026, we have “Kings of Sweden” at our workplaces, and they want AI solutions at any cost for the same ornamental reasons as the Vasa. But while you might disagree with the “physics” of software development, it’s often as hard to fight as real-world physics. If the cost of failure is high (loss of data, money, or life), you can’t blindly jump in and build an AI Vasa.

Programming is thinking, not typing

Venkat pointed us to Wikipedia’s list of obsolete occupations. Will “programmer” end up on this list of over 100 obsolete jobs, like the town crier or the leech collector? He argues the opposite:

- Our job isn’t about typing characters; that is the least enjoyable part.

- We’re thinkers, not typists. We write applications in our heads, not on the keyboard. The keyboard’s just a user interface.

- Compilers didn’t kill development; they accelerated it. AI will do the same.

The real threat is cognitive decline. Higher levels of abstraction take us far from the code where we developed our hard-earned critical thinking skills. If we lose the ability to think critically because we are delegating our thinking to machines, we are in trouble. As Venkat put it, the “illiterate” of the future are those who cannot think critically.

New rules of engagement

So, how do we handle the “Black Swan” event of AI? Venkat suggests we look to the rules recently laid down by Linus Torvalds for the Linux kernel:

- No AI Sign-offs: AI agents cannot add “Signed-off-by” tags.

- Mandatory Attribution: It must be clear if code was assisted by AI.

- Full Human Liability: The human submitter bears 100% responsibility for bugs, security flaws, and license compliance.

“Don’t trust and verify the heck out of it” is the new norm.
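To make those three rules concrete, here is a minimal sketch of how a team might check a commit message against them before accepting a change. It is not the kernel's actual tooling. The `Assisted-by:` trailer name, the `check_commit_message` function, and the `ai_identities` set are assumptions made for illustration; consult the kernel's own documentation for the tags it really expects.

```python
import re

# "Signed-off-by:" is the kernel's long-standing sign-off trailer.
SIGNOFF = re.compile(
    r"^Signed-off-by:\s*(?P<name>.+?)\s*<(?P<email>[^>]+)>\s*$", re.MULTILINE
)
# ASSUMPTION: the attribution trailer name is illustrative only.
ASSISTED = re.compile(r"^Assisted-by:\s*\S.*$", re.MULTILINE)


def check_commit_message(message, ai_assisted, ai_identities=frozenset()):
    """Return a list of policy problems; an empty list means the message passes.

    ai_identities is a hypothetical set of lowercase names that belong to
    AI agents and therefore may not sign off on a change.
    """
    problems = []
    signers = [m.group("name").strip() for m in SIGNOFF.finditer(message)]

    # Rule 1: AI agents cannot sign off.
    for name in signers:
        if name.lower() in ai_identities:
            problems.append(f"AI agent '{name}' may not sign off")

    # Rule 3: a human must take responsibility for the change.
    if not any(name.lower() not in ai_identities for name in signers):
        problems.append("missing human Signed-off-by trailer")

    # Rule 2: AI assistance must be disclosed.
    if ai_assisted and not ASSISTED.search(message):
        problems.append("AI-assisted change lacks an attribution trailer")

    return problems


if __name__ == "__main__":
    msg = (
        "Fix off-by-one in parser\n\n"
        "Signed-off-by: Jane Doe <jane@example.com>\n"
    )
    print(check_commit_message(msg, ai_assisted=True))
    print(check_commit_message(msg + "Assisted-by: some-assistant\n", ai_assisted=True))
```

A check like this only catches missing paperwork. It says nothing about whether the code is correct, which is why the human who signs off still has to do the verifying.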

A framework for success

To remain relevant and responsible, Venkat outlined a five-step process for working with AI:

- Understand the Problem: Don’t jump to AI immediately. Take the time to grasp the requirements.

- Ideate: Spend time thinking about possible solutions and their consequences.

- Activate AI: Only after ideation should you engage the tool.

- Iterate: Work through the solutions provided.

- Evaluate Critically: Verify everything before it goes anywhere near production.

We can’t go faster with ignorance. We need competency to gain speed and protect our reputations. AI is a powerful tool, but in the hands of a fool, it’s a dangerous one.

Let’s stop the fear-mongering. There is real work to be done, and it requires our minds more than ever.