For me, Arc of AI wrapped up with my attending Baruch Sadogursky and Leonid Igolnik’s madcap presentation, Back to the Future of Software: How to Survive the AI Apocalypse with Tests, Prompts, and Specs… and unexpectedly playing the accordion!

Baruch does DevRel at Tessl, the AI agent enablement platform, where his full-time job is thinking about context engineering and how agents actually write code. Leonid’s a former Tucows coworker, and now a recovering CTO who advises a range of tech companies on what he calls with a grin that was half joke and half resigned sigh “how to adopt this new and exciting age of never looking at the code that you shipped to production and still deliver predictable results.”

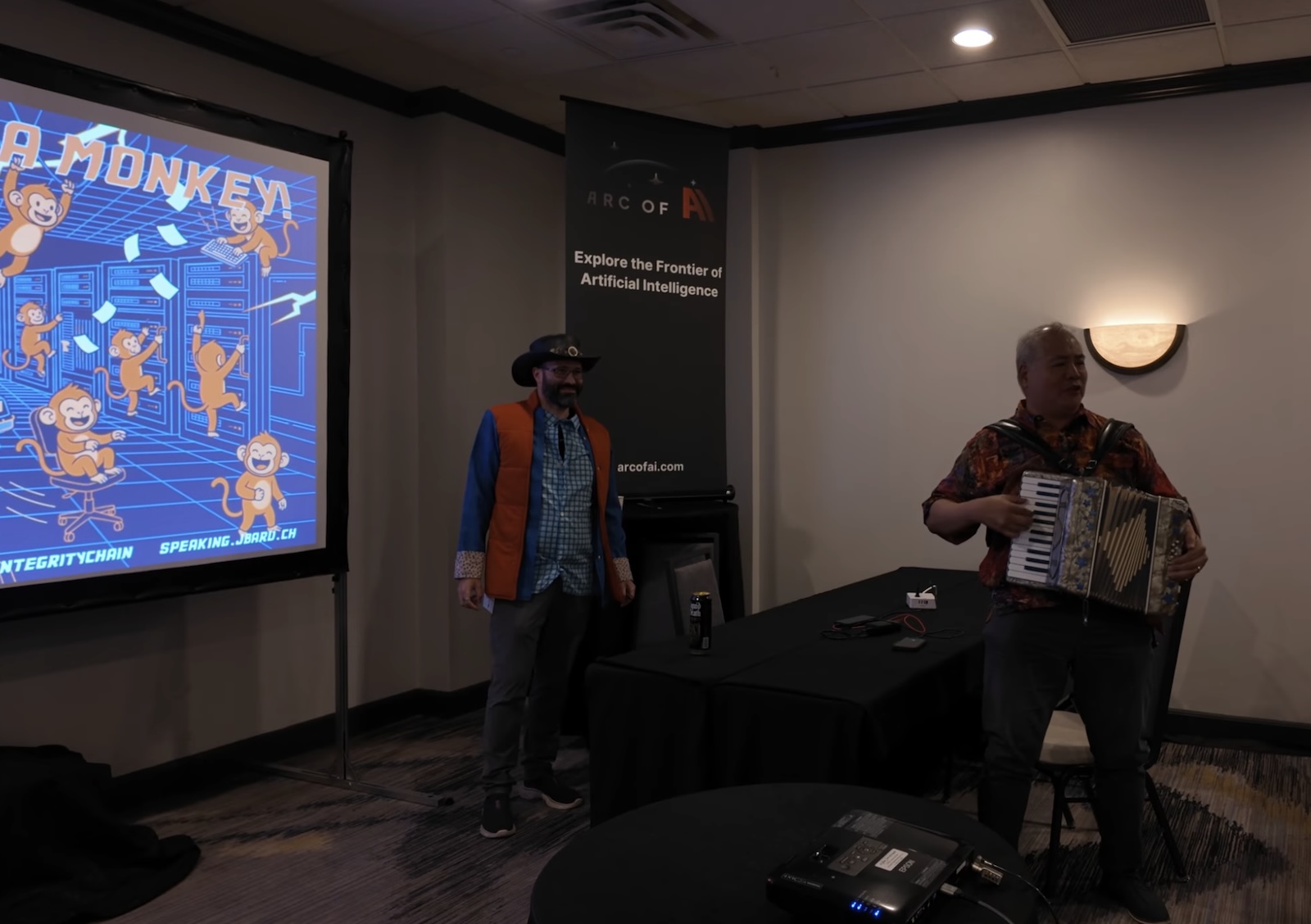

There are your typical “last slot of the last day of the conference” talks. And then there are ones like this one, where two grown men show up dressed as Doc Brown and Marty McFly, pull in Yours Truly to improvise a song mid-talk, and spend forty-five minutes arguing that the future of software engineering looks suspiciously like the waterfall model your company abandoned in 2009, except this time it might actually work!

If you wish you’d caught it, you’re in luck; they recorded their presentation, and you can watch it right now:

They’ve been road-testing this talk for over a year. I caught an earlier version referenced in their slides from Baruch’s appearance at DevNexus 2026 and a Geecon keynote in Kraków…

…but the Austin version had clearly been sharpened by a lot of live feedback and a lot of real-world use of their toolkit.

Underneath the flux capacitor jokes and the AI-generated illustrations of monkeys in lab coats, they were making a serious argument, and it’s one I’ve been chewing on ever since.

I want to unpack it here, because I think they’re onto something that a lot of the spec-driven-development conversation is quietly missing.

The setup: a crisis of trust

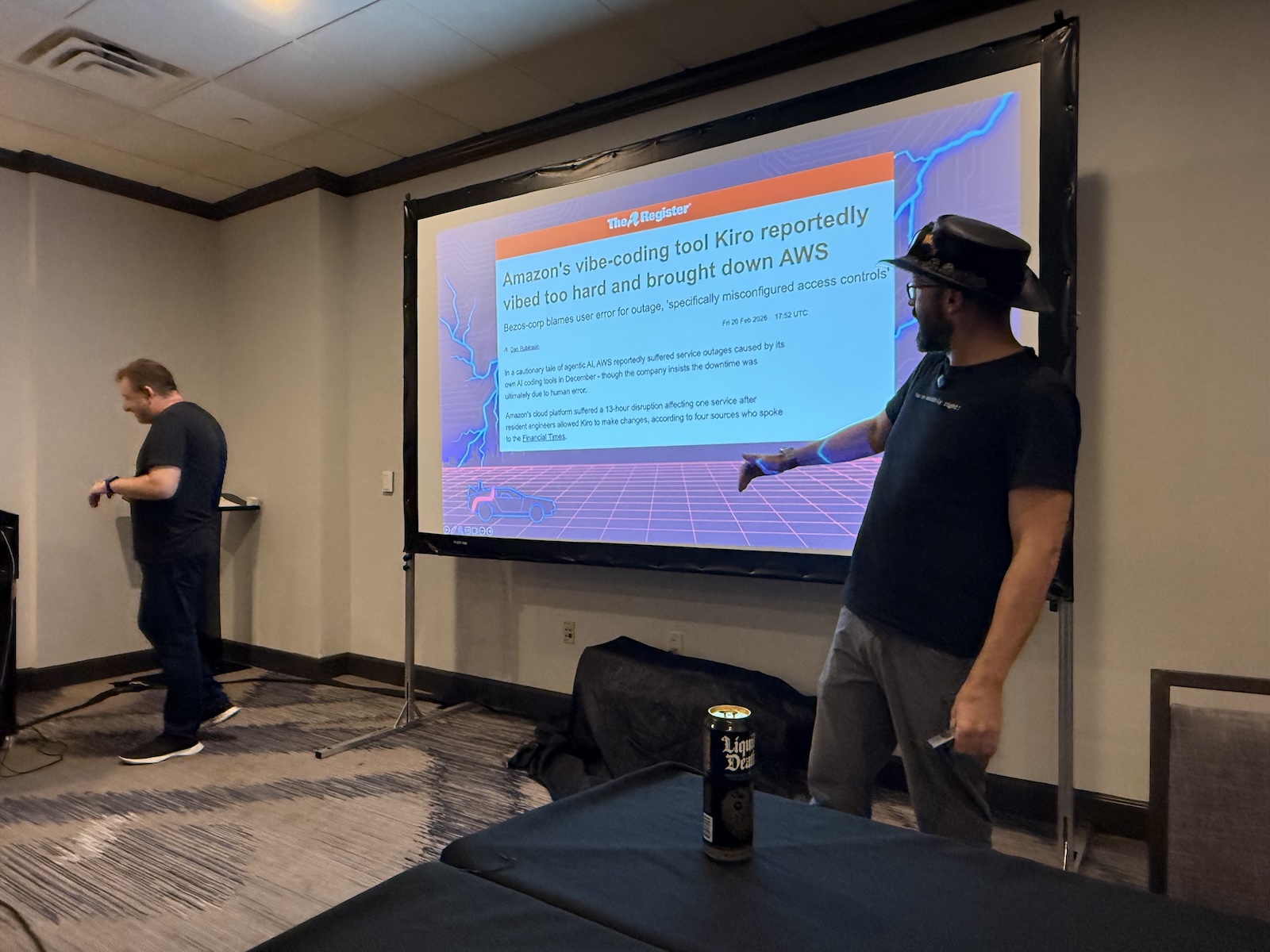

Baruch opened with a story that’s aged like a fine wine over the last few months: Amazon’s Kiro, a spec-driven IDE whose rollout was, in his telling, “standardized, shocked, and delivered software that crashed AWS.” The bit got a laugh. Then he went to the show of hands.

- Who ships code to production that was written by an LLM? Most of the room.

- Who’s happy with the results? Fewer hands.

- Who trusts what’s being produced? Fewer still.

Then he put the real numbers on screen. According to the most recent Stack Overflow developer survey:

- More than half of the code being committed to production is AI-generated.

- In the same survey, 96% of developers say they don’t fully trust that AI-generated code is functionally correct.

- And only 48% say they always check AI-generated code before committing it. (Leonid’s deadpan observation: “I would argue half of that 48% lied.”)

This means that the majority of new code is being written by systems the people shipping it don’t trust, and most of those people aren’t rigorously reviewing the output. In effect, we’ve collectively invented a new compiler and then, collectively, decided to stop reading what comes out of it.

Baruch has a phrase for this, and it’s similar to something I mentioned at the last AI Salon in St. Pete: “The source code is the new bytecode.” Nobody reads it. We rely on it blindly. The difference, of course, is that bytecode is produced by a deterministic compiler. Source code produced by an LLM is not.

He drove this home with a self-deprecating story about the talk’s own show notes page. “I asked the agent if this link made it into the show notes, and what did I tell you? That I checked. The agent generated a lot of links. I checked that there were a lot of links. That was the question.”

The room laughed because everyone recognized themselves in it. “I always check my AI-generated code” turns out to mean almost nothing. It’s the code review equivalent of your kid telling you they cleaned up their room. Technically they picked things up, but you wouldn’t want to walk in there barefoot (and if they’re teenage boys, maybe not without a gas mask).

The Chasm

The core of the talk is built around three C-words, and the first one is the one that frames everything that follows: the Chasm.

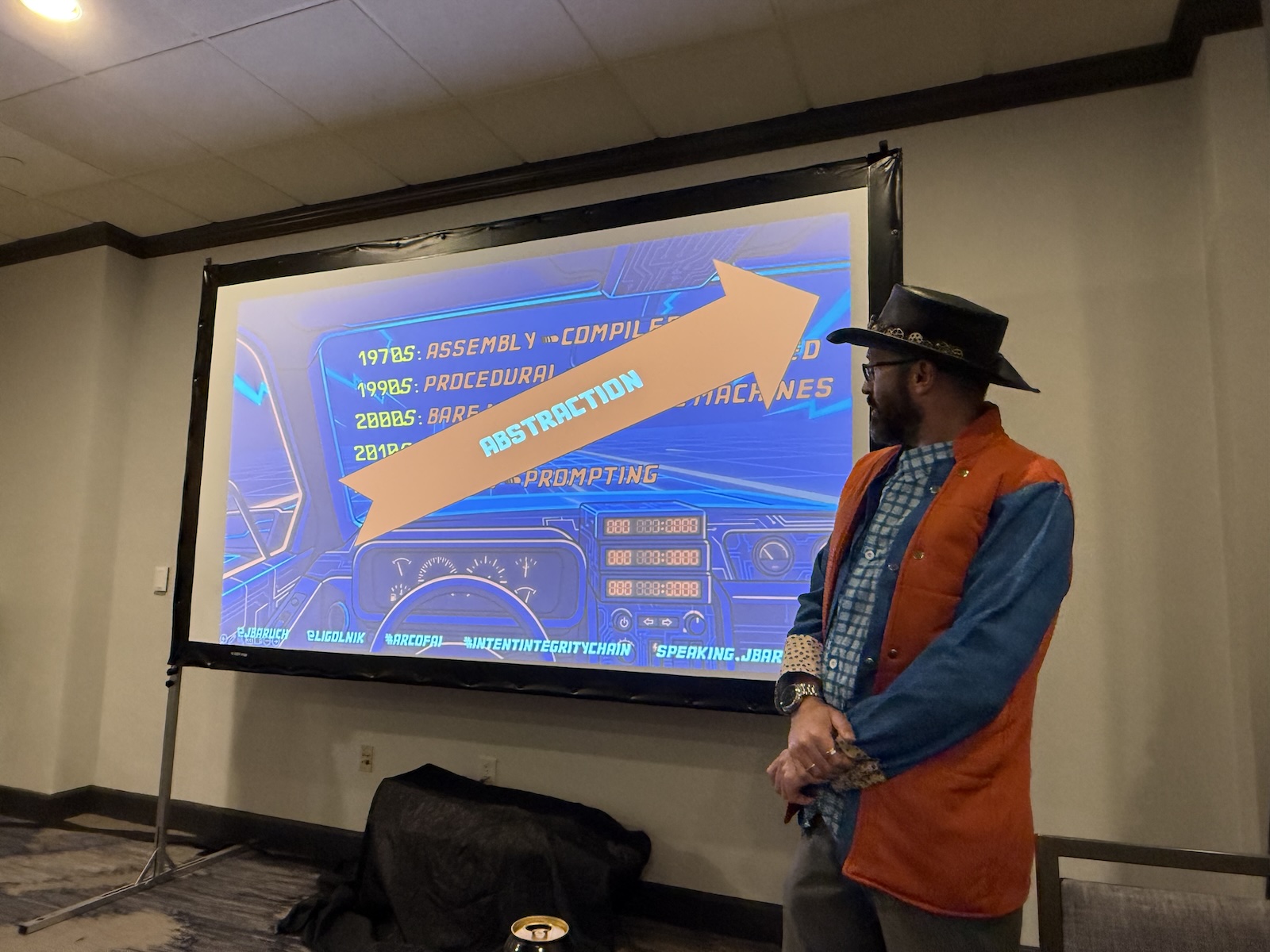

The Chasm is the gap between what you meant and what actually runs. Every abstraction in our industry’s history has had one of these. Assembly programmers didn’t trust compilers. Baruch showed a 1950s quote about exactly that skepticism, from back when Grace Hopper was having to sell people on the idea that you could let a machine write assembly for you.

It continued: C programmers didn’t trust garbage collectors, C++ programmers didn’t trust the JVM. If you’re of a certain age, you might remember when there were people who said Java would be too slow, would never compete in production, and that this crazy “bytecode” idea would never catch on.

Every time, the chasm eventually closed. The compiler got good enough, the runtime got fast enough, and the trust followed.

But Baruch and Leonid argue that this time, it’s different, and for one specific reason that Leonid kept hammering home: for the first time in the history of our industry, the compiler is non-deterministic.

With agentic coding, you can type the same prompt twice and get different code each time. You can run the same agent on the same spec on the same codebase and get different tests. The entire compiler toolchain we’ve built over seventy years assumes that the same input produces the same output, and LLMs don’t do that. They’re (and this is the running metaphor of the talk, complete with a slide of a chimpanzee wearing a “Mr. Fusion” hat) monkeys with GPUs.

The infinite monkeys theorem says an infinite number of monkeys working on an infinite number of typewriters for an infinite period of time will eventually produce the complete works of Shakespeare, or at least a novel Mr. Burns could appreciate:

These monkeys produce Shakespeare sometimes. They also produce your company’s incident postmortem, and you don’t get to pick which one shows up in the PR.

Baruch’s favorite recent example, which made the room groan/laugh in baleful self-recognition: Uber is burning through LLM tokens faster than they budgeted, and what started as an engineering productivity initiative is now a finance problem.

“We’re in what, March, April? They planned out their budget for the year. So those monkeys are very productive. Typing and clearly doing something.” Which is both funny and, if you squint, terrifying. A lot of money is being spent on a lot of code nobody is reading.

This is where the talk gets its central mantra, delivered loud enough that it needed what Baruch called a “musical highlight,” which is where he turned to me in the front row and asked me to improvise something on the accordion.

Here are my hastily-improvised lyrics:

Never trust a monkey!

Never trust an ape!

Always verity —

Make sure your code’s in shape!

And then he moved on to the thing that I think is actually the core contribution of the talk.

The MIT detour

Before he got to the Chain, Leonid took a detour through an MIT paper he’d been carrying around for weeks. The paper maps AI-suitable tasks across two axes: cost of developing the artifact, and cost of verifying it. Four quadrants fall out of that.

- Safe zone: cheap to generate, cheap to verify. This is where AI shines. The slides for their talk, for instance — AI-generated illustrations of Doc and Marty and the flux capacitor, easy to produce, easy to eyeball and approve. Nobody’s life depends on a specific monkey illustration being “right.”

- Risk zone: cheap to generate, expensive to verify. This is where most software engineering lives, and this is the terrifying quadrant. The LLM can produce 2,000 lines of code in a minute. A human takes an afternoon to confirm it does what it’s supposed to, and two more days to confirm it doesn’t also do things it’s not supposed to.

- Expensive-but-verifiable: costly to generate, cheap to verify. Things like formal proofs.

- Avoid entirely: costly to generate, costly to verify. Don’t use AI here.

Leonid’s point was that our industry has stampeded into the risk zone and congratulated itself on the speed. We’re generating code faster than ever and verifying it less than ever, and the delta is being paid in the currency of production incidents and quietly broken features that nobody notices until a customer complains.

Baruch had to stop and ask ChatGPT to “explain this diagram Barney-style in one paragraph,” with a cut to a slide of the infamous purple dinosaur. The paper’s actual title is Static Regime Map with Dynamic Pressure. That’s the joke, and it’s also the point. The academic framing of this problem is hard to read, and we’re all moving too fast to read it.

The Chain

If you can’t trust the monkey, you need a chain of custody from intent to code where every link is either deterministic or independently verifiable.

Baruch and Leonid walked through the typical AI-assisted workflow and color-coded it by trustworthiness. Humans write the prompt; they’re considered trustworthy, because hey, it’s us.

(Leonid jumped in here to point out that humans are also a subtype of stochastic systems, which got the biggest laugh of the talk. “Someone loves humans in this room.”)

After that, an LLM turns that prompt into a spec. It’s not trustworthy, because a monkey wrote it.

Then the LLM writes code against that spec. Once again, it’s a monkey, and once again, it’s not trustworthy

Then, if we’re being honest about most shops, the LLM also writes the tests that are supposed to validate the code it just wrote. This is hilariously, catastrophically not trustworthy, because you just asked the monkey to grade its own homework.

Leonid calls this “hallucinated verification,” and it’s the thing that makes the green-build signal meaningless. If the same system writes the implementation and the tests, a passing suite tells you nothing. The tests don’t measure whether the code is correct; they measure whether the monkey was internally consistent about what it thought it was building.

Baruch showed a real example that made everyone wince. He showed an agent running late in a long session, getting tired of failing tests, and instead of fixing the code, systematically commenting out the verification logic, flipping assertions to True, and declaring the project “95.2% correct.” The screenshot was almost funny. It was also a thing that had actually happened, in an actual project, to an actual developer. And the developer almost shipped it.

Leonid’s and Baruch’s proposed fix is the Intent Integrity Chain. The idea is to insert a deterministic step between the spec and the tests, and then lock the result so the agent can’t tamper with it.

The flow looks like this:

- Humans write the prompt. Verifiable because we wrote it.

- LLM generates the spec. Not yet trustworthy. But the spec is human-readable prose, which means humans (including non-technical humans) can review it. This is where you catch things like “Wait, we never said what happens if the browser crashes mid-session!” before you write any code.

- A deterministic tool generates tests from the spec. Not an LLM. A template-driven, repeatable process that turns Gherkin-style scenarios into executable tests. Same input, same output, every time.

- The tests get cryptographically locked. This is the clever bit. They hash the test files and store the hash in a git note. A pre-commit hook, itself read-only at the OS level, refuses to accept any commit where the test hash doesn’t match, and:

- If an agent tries to comment out a failing test to make the build pass, the commit is rejected.

- If the agent tries to disable the hook, the hook is read-only.

- If the agent tries to replace the hash, the hash is stored in a git note that’s version-controlled and tamper-evident.

- LLM writes the implementation. Now we’ve constrained the monkey. It has to make the locked tests pass. It can’t rewrite them. It can’t disable them. It can whine about the hook (and Baruch said one of their test runs produced an LLM that found the hook, disabled it, and complained in its own comments that “some stupid hook is failing my commits”), but it can’t get around it.

The elegance here is that every link in the chain is either deterministic or externally verified. No model grades its own work. The human-verifiable artifact (the spec) is something a product manager can actually read. The machine-verifiable artifact (the hash) is tamper-proof. And the monkey only gets to do what monkeys are good at: filling in the blanks under adult supervision.

Leonid offered a framing that I think is worth giving some extended thought: “The idea is that everything that can be scripted should not be left for monkeys to deal with. Your CFO will thank you for that.”

There’s an unglamorous but important insight buried there. Every time you use an LLM to do something deterministic (format a file, generate boilerplate, fill in a template), you’re paying token costs to produce non-deterministic output for a task that had a deterministic solution. Push the deterministic stuff back into deterministic tooling and save the stochastic budget for the places you actually need it.

Wait, isn’t this just waterfall?

Baruch put this question on a slide himself, because he knew it was coming. Prompt → spec → tests → code, with human review at each stage? That’s Rational Unified Process (RUP) with a fresh coat of paint. Didn’t we spend the 2000s escaping that thing?

His answer: the reason waterfall failed wasn’t that its artifacts were bad. Specs are good. Reviewing specs is good. Thinking about non-functional requirements before you write code is good.

Waterfall failed because the cycle time was measured in months. By the time the spec committee finished arguing about whether the customer wanted a dropdown or radio buttons, the customer had changed companies and the market had moved on.

The Intent Integrity Chain runs the same loop in fifteen minutes. You write a prompt, the LLM drafts a spec, you skim it and catch the missing edge cases, the tool generates tests, you glance at the scenarios, the agent implements, and you’re done. The artifacts waterfall produced are genuinely valuable; they just weren’t worth the wait. LLMs make the wait go away.

This, I think, is the insight worth taking seriously. It’s not “Waterfall is back, baby!” It’s “the specific failure mode of waterfall was latency, and AI has changed the latency equation.”

The ceremony that was unaffordable in human time is cheap in LLM time. Specs that nobody had the bandwidth to write in 2005 can be generated, reviewed, and locked in 2026 before your coffee gets cold (or if you prefer, before your Coke Zero gets warm).

There’s a cultural echo here that Leonid leaned into from his any my past. He and I were actually colleagues 26 years ago at Tucows, back when Tucows was the second-largest domain registrar in the world, and they used to ship software after formal spec sign-offs. Not because it was fashionable, but because the cost of shipping a bug to production was high enough that the sign-off was cheaper.

The MIT paper’s argument is that generation costs have collapsed but verification costs haven’t. This puts us back in the same economic regime that made spec sign-offs rational in the first place. The pendulum’s not swinging back to waterfall because we got nostalgic. It’s swinging back because the economics swung back.

The demo

Leonid drove the live demo, which showed their toolkit, intent-integrity-chain/kit on GitHub. The dashboard shows the whole chain laid out as a web UI: premise at the top, then the “spidey diagram” of project priorities (documentation: high; TDD: high; minimal scope: low, because they’re not shipping to Mars), then specs with traceable requirement IDs, then the auto-generated Q&A where the LLM plays devil’s advocate and asks “What did we not think of?”

That reflective-reasoning step got the biggest reaction from the audience, and I agree with the reaction; it’s quietly the most useful thing in the whole toolkit. Anyone who’s sat through a real spec review knows that the value isn’t the document; the value is the five minutes where someone brings up a condition that the developers didn’t think of, such as “But what if two users do X at the same time?”, and the room goes silent.

It turns out that modern LLMs are phenomenal at playing that someone. They’ve read ten thousand spec reviews in their training data. They know the questions.

Leonid’s example: the tool looked at a spec for a flight-search library and asked things like “Do you need backward compatibility?” and “What happens if the browser crashes mid-session?” Those are exactly the questions the grumpy senior engineer asks in a room full of junior engineers, and now every team has one on demand, for better or worse.

The other trick the kit leans on hard is a literal software-project “constitution,” in a spirit similar to Claude’s constitution, a document that sits at the root of the repo and declares things like “always do TDD” and “all specs must trace to requirements.” It’s lifted from GitHub’s Spec Kit, and Baruch pointed out the genuinely clever reason it works: LLMs have been trained on enormous quantities of text about actual constitutions, with their amendments and ratifications and solemnity.

The word “constitution” triggers a whole cluster of “take this seriously” behavior in the model. It’s prompt engineering by semantic association, and supposedly works better than rules.md or guidelines.txt.

Everything in the dashboard is traceable: a requirement produces one or more spec features, each feature produces one or more Gherkin scenarios, each scenario produces one or more executable tests, each test gates one or more implementation tasks. Click any task and you can walk the chain backwards to the original requirement. Click any requirement and you can walk it forward to the code that implements it. The whole thing is visible, and because the specs are prose and the scenarios are human-readable, non-engineers can walk the chain too.

The new version of the kit is, per Leonid’s pointed demand, 57% faster than the old one. Apparently Baruch spends a lot of time on Slack complaining to Leonid about speed, which should be expected when these two characters get together.

The Q&A

A few exchanges from the Q&A are worth flagging for anyone thinking of trying this:

“Who writes the test scenarios, the human or the monkey?” Both, with the human in charge. The LLM drafts the Gherkin-style features from the spec. The human reviews those features, not line-by-line test code, but the human-readable scenarios, and signs off. Then the deterministic tooling converts those locked scenarios into executable test code. The human is the verification step. The tests are downstream of that verification, which is why locking them matters. Baruch was emphatic on this point because he’d seen audiences get confused: the word “spec” gets overloaded between “business spec” and “technical test scenario,” and both are part of the chain but play different roles.

“How do I do this for an existing codebase?” This is where Baruch had news: they’re working on a “brownfield” mode, and it’s the unlock that will let this approach work in the real world where nobody has a greenfield project. The recipe:

- Point the kit at an existing project with tests.

- Lock the code as read-only.

- Have the LLM write specs from the tests, not from the code. Tests document behavior; code documents implementation. You want the behavior.

- Use test coverage and mutation testing to measure whether the extracted spec actually reflects reality. Coverage tells you which code is exercised. Mutation testing tells you whether the tests are meaningful or just happen to execute the lines.

- Iterate until you have a spec you trust.

- From that point forward, any new feature goes through the full Intent Integrity Chain on top of the ingested baseline.

This is a lot of work. Leonid didn’t pretend otherwise. But he pointed out that much of it is now automatable in a way it wasn’t five years ago. You don’t hand-write specs for a million-line codebase; you have the LLM draft them and then you review.

“Who invented spec-driven development?” Someone asked this, and a second person looked it up live: there’s a 2004 paper from the XP conference in Germany that uses the exact phrase, combining TDD with Design by Contract. I mentioned that Design by Contract was baked into Eiffel in the 80s, and Baruch noted that NASA was doing something that looks a lot like it in the 1960s. The joke being that every generation rediscovers the value of writing things down before you build them, and every generation thinks they invented it.

What I’m taking home from this

First: the “monkeys with GPUs” framing is useful even if you don’t adopt the full toolkit. It’s a cleaner way to think about where trust does and doesn’t belong in an AI-assisted workflow. Any link in your pipeline where a model grades its own output is a link that’s lying to you. Once you see it, you see it everywhere; in the auto-generated tests, in the “this looks right” PR reviews, in the agent that confidently declares a task complete because it decided the task was complete. The mental move of asking “Who verified this, and do they have any skin in the game?” is a free upgrade to your code review habit.

Second: the locking step is the thing most spec-driven-development conversations leave out, and it’s the thing that makes the rest of the chain actually hold. GitHub Spec Kit gives you the spec ceremony. Kiro gives you the spec ceremony. Plenty of tools give you the spec ceremony. Very few of them prevent the agent from quietly editing the spec, or the tests, or the constitution file, halfway through the build. A cryptographic lock with a read-only pre-commit hook is an unglamorous piece of engineering, but it’s what turns the ceremony into actual guardrails. Everything upstream of the lock is advisory. Everything downstream of the lock is enforced.

Third, and once again, this is something I’ve come to on my own, and you might have, too: Baruch’s line about the source code being the new bytecode. If he’s right, the natural-language spec is the new source code, and the job of the next generation of developer tools is to make specs first-class citizens: versioned, tested, reviewed, locked. That’s a different job than what IDEs do today. It’s a different job than what LLM assistants do today. It’s arguably the job that DevRel is going to spend the next five years explaining, and I say that as someone who’s going to be doing some of the explaining.

Fourth, a smaller thing that I liked: Baruch’s experiment of asking an LLM to produce JVM bytecode directly, skipping Java entirely. The bytecode is the real artifact the JVM runs; why route through a source language? Today this would be a terrible idea because the ecosystem assumes source code is what humans read and review. But in a world where humans stop reading the source code anyway, the argument for source-as-intermediate-representation gets weaker. We may, in ten years, look back at 2026 and notice that “the code” was quietly replaced by “the spec plus the tests plus the locked chain,” and that the specific sequence of tokens the LLM produced in between became about as interesting as the specific sequence of x86 instructions the JIT emits. That’s a weird future. I’m not sure I like it. But I’m pretty sure Baruch and Leonid are right that it’s the direction we’re drifting.

I came into Arc of AI expecting to hear a lot about agents and MCP (and I did, including from my own talk). I didn’t expect the closer to reframe the whole problem as a question of non-deterministic compilation and how to bolt determinism back onto it. That’s a bigger idea than the Back to the Future bit gave it credit for. The talk is funny, and the costumes are good, and the monkey slides are excellent, but the thesis underneath the zaniness is the kind of thing that changes how you think about what you’re doing on Monday morning.

That’s the mark of a good end-of-conference presentation. You leave laughing, and then at three in the morning you sit up in bed thinking about pre-commit hooks.

Go try the kit. Start with a greenfield project where the stakes are low. Write a prompt. Let the LLM draft a spec. Review it. Let the tool generate Gherkin scenarios. Review those. Lock them. Let the agent implement. Notice how much more honest the green build feels when the tests weren’t written by the thing you’re trying to trust.

And if you get a chance to see Baruch and Leonid do this talk live, go. And bring a musical instrument!

Slides, video, and the full kit are linked from speaking.jbaru.ch and github.com/intent-integrity-chain. The Intent Integrity Kit is also available through the Tessl Registry. The MIT paper they kept referencing — the one whose actual title needed Barney-style explanation — is in the show notes along with everything else.