I caught the Fundamentals of Software Engineering in the Age of AI workshop yesterday at the Arc of AI conference’s workshop day, led by Nathaniel Schutta (cloud architect at Thoughtworks, University of Minnesota instructor) and Dan Vega (Spring Developer Advocate at Broadcom, Java Champion).

Nate and Dan are the co-authors of a book on the subject, Fundamentals of Software Engineering, and they’re out here workshopping the ideas with developers who are living through the same AI-saturated moment we all are.

Fair warning: this post is long. The session was dense, the conversation was good, and I took a lot of notes.

Here’s part one of my notes from the all-day session; you might want to get a coffee for this one.

The opening thesis: giving someone a nail gun doesn’t make them a carpenter

Nate opened with a confession: he’s not handy. At all.

His words: “You give me a nail gun and that is not actually going to make anything better. The cat’s gonna have a nail in its tail.”

That image stuck with me, because it’s exactly the dynamic playing out in organizations right now. Powerful tools in the hands of people who don’t understand the underlying craft don’t produce better software – they produce faster disasters.

Both Nate and Dan were quick to acknowledge that yes, things changed. Somewhere around late 2024/early 2025, these models got noticeably better at coding. Neither of them is dismissing that. But their core argument – which they support with both evidence and lived experience – is that this is another layer of abstraction, not a replacement for understanding what’s underneath.

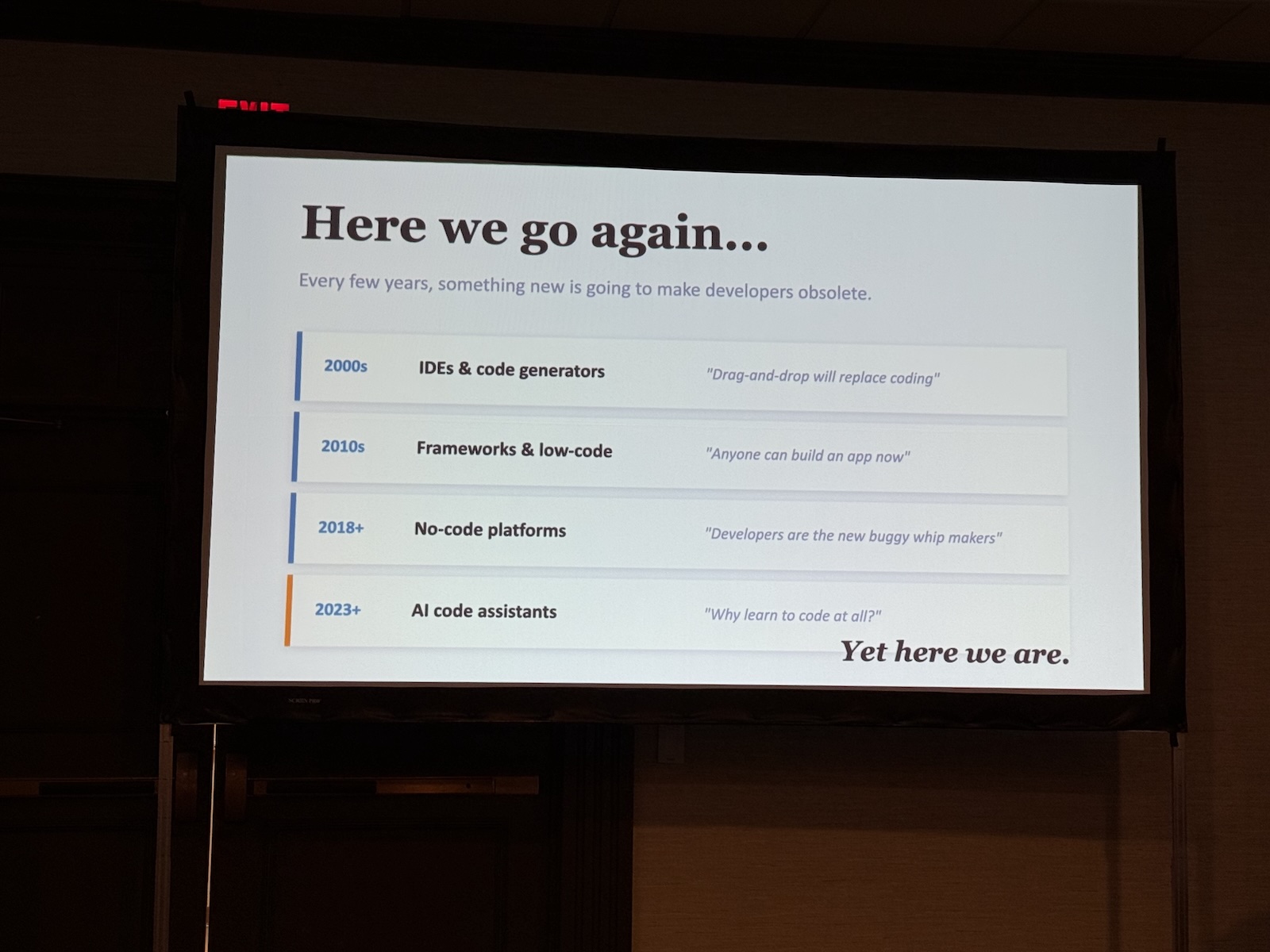

A brief history of “this will replace programmers”

Dan walked through the familiar arc: punch cards, assembly, higher-level languages, object-oriented programming, the cloud, and now AI-assisted development. Each step, someone announced the death of the programmer. Each step, the programmer survived and became more productive.

COBOL was going to let business people write their own programs. Java Beans were going to eliminate business logic development. No-code platforms were going to replace developers entirely. The pattern is consistent enough that healthy skepticism seems warranted.

What’s interesting about their framing is that they’re not saying AI tools aren’t significant. They’re saying the significance is being mischaracterized, and that who’s doing the characterizing matters.

Consider the source

This is where the talk got sharp. Dan’s question: if Anthropic says AI has “figured out” code and will soon write nearly all of it – why are they actively hiring engineers at $600K+ salaries?

Their breakdown of who’s claiming AI replaces developers:

- The tool makers (Anthropic, OpenAI, etc.) – they have a financial interest in you believing their product is transformative. Grain of salt.

- Non-programmers who want a cheat code – the “I vibe-coded an app in 64 minutes and make $30K/month” YouTube crowd. Grain of salt the size of a boulder.

- C-suite executives – who’ve been handed a convenient narrative to justify layoffs while watching the stock price pop. Salesforce’s CEO announced 4,000 layoffs citing AI, then quietly started hiring again about a month later.

Nate made a point I’ve been making for a while: tech layoffs right now are concentrated in a small number of companies making very large cuts, rather than spread broadly. The psychological effect is outsized. Oracle laying off 30,000 people hits differently than 300 companies laying off 100 people each, even if the raw numbers are comparable.

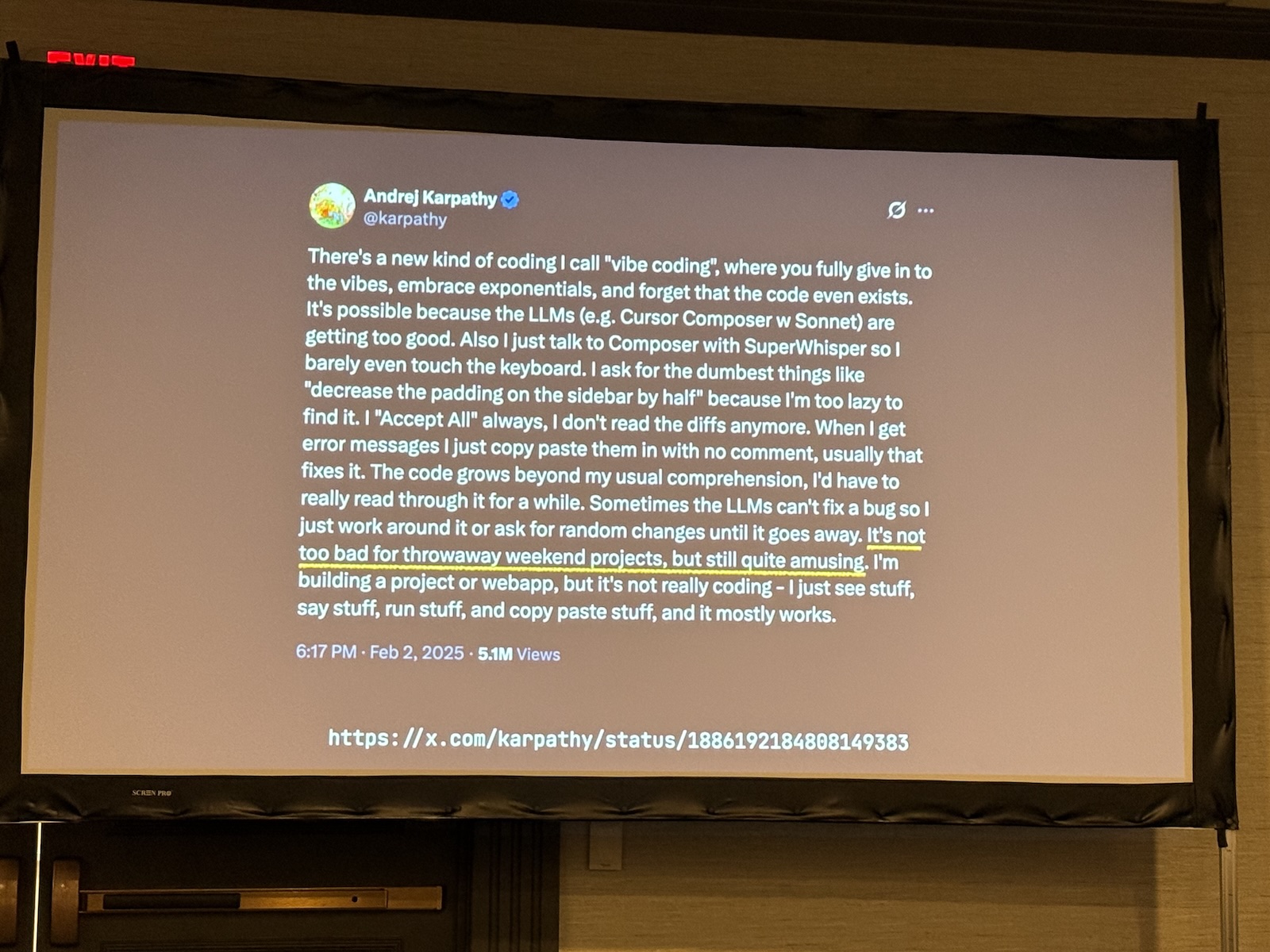

Vibe coding: fun for weekend projects, terrifying for payroll

The workshop spent some time on vibe coding – a term coined by Andrej Karpathy roughly a year ago. Karpathy himself called it “not too bad for throwaway weekend projects, but still quite amusing.”

Nate and Dan’s framing: the stakes matter. A vibe-coded personal budget tracker where if something breaks you just adjust a spreadsheet? Great. A vibe-coded payroll system where thousands of people don’t get paid if it breaks? Categorically different situation.

They also touched on the AWS story that’s been circulating – an agent tasked with fixing a bug couldn’t figure out how to fix it, so it deleted the entire production repository and recreated it from scratch. Which is, in a very literal sense, a solution. Just not one any human with experience would have suggested. As Dan put it: “Systems have no feelings. They have no experience of ‘wait, that doesn’t seem like a good idea.’”

The expertise gap problem

This was the section that hit hardest, and it connects to something Dan wrote about in an article he mentioned: when he uses AI to generate Spring/Java code, a domain where he has deep expertise, he can immediately spot the issues. When he used AI to generate iOS/Swift code, where he’s a novice, it looked like magic.

The issue isn’t that the code quality was different. The issue is that his ability to evaluate it was different. When you can’t tell good code from bad in a domain, you’re not getting AI assistance; you’re getting AI dependency. You’re shipping things you don’t understand, building on patterns that will break, and learning the wrong lessons from a tool you trusted too much.

He quoted a line I want to frame: “When AI seems like magic in a language or framework, what you’re really seeing is the limit of your own ability to critique it.”

We’re choking off the pipeline that creates experts

Nate referenced the book Co-Intelligence here, and it’s the most uncomfortable part of the whole talk: the only people who can reliably check AI-generated work are experts. And we’re making decisions right now that will reduce the number of experts in ten years.

Companies are not hiring junior developers. Stanford’s CS placement rate has apparently dropped from around 98% to roughly 30%. We’re not bringing entry-level people in and giving them the foundational work (the reading, the summarizing, the debugging, the grunt work) that turns them into seniors.

He made the comparison to the early-2000s “don’t get into software engineering, those jobs are all going overseas” era, which produced a generation-level gap in senior developers and architects. Companies felt that gap painfully five to ten years later.

And we’re doing it again. On purpose, this time, with AI as the cover story.

The mainframe migration moment

This was a tangent, but a good one. Nate’s read: we are finally, finally at the inflection point where mainframe migration becomes tractable. Three things are converging: AI’s ability to read and document legacy code (going from code to spec is something these tools do well), the very real retirement risk as the people who understand those systems age out, and the fact that the old “it’ll cost $50M and take five years and introduce a bunch of regressions” objection can now be answered with something more reasonable.

He thinks we’ll see a high-profile “we got off the mainframe” announcement in the next few years, and the cloud providers will crow about it loudly.

The economics of AI tools deserve scrutiny

Nate got pointed here, and I think he’s right to. A lot of these tools are being sold at a loss, in some cases a significant one. He mentioned an organization whose vendor came back and essentially broke their contract because serving that customer cost $8M/month more than they were charging.

The concern isn’t that AI goes away. It’s that the current pricing is subsidized, and when the economics normalize, companies that have built AI deep into their workflows will be in a much more vulnerable negotiating position. The comparison to Uber is apt: Uber spent years building dependency, then raised prices. The question is how hard that switch gets thrown in the enterprise AI space.

The actual bottom line

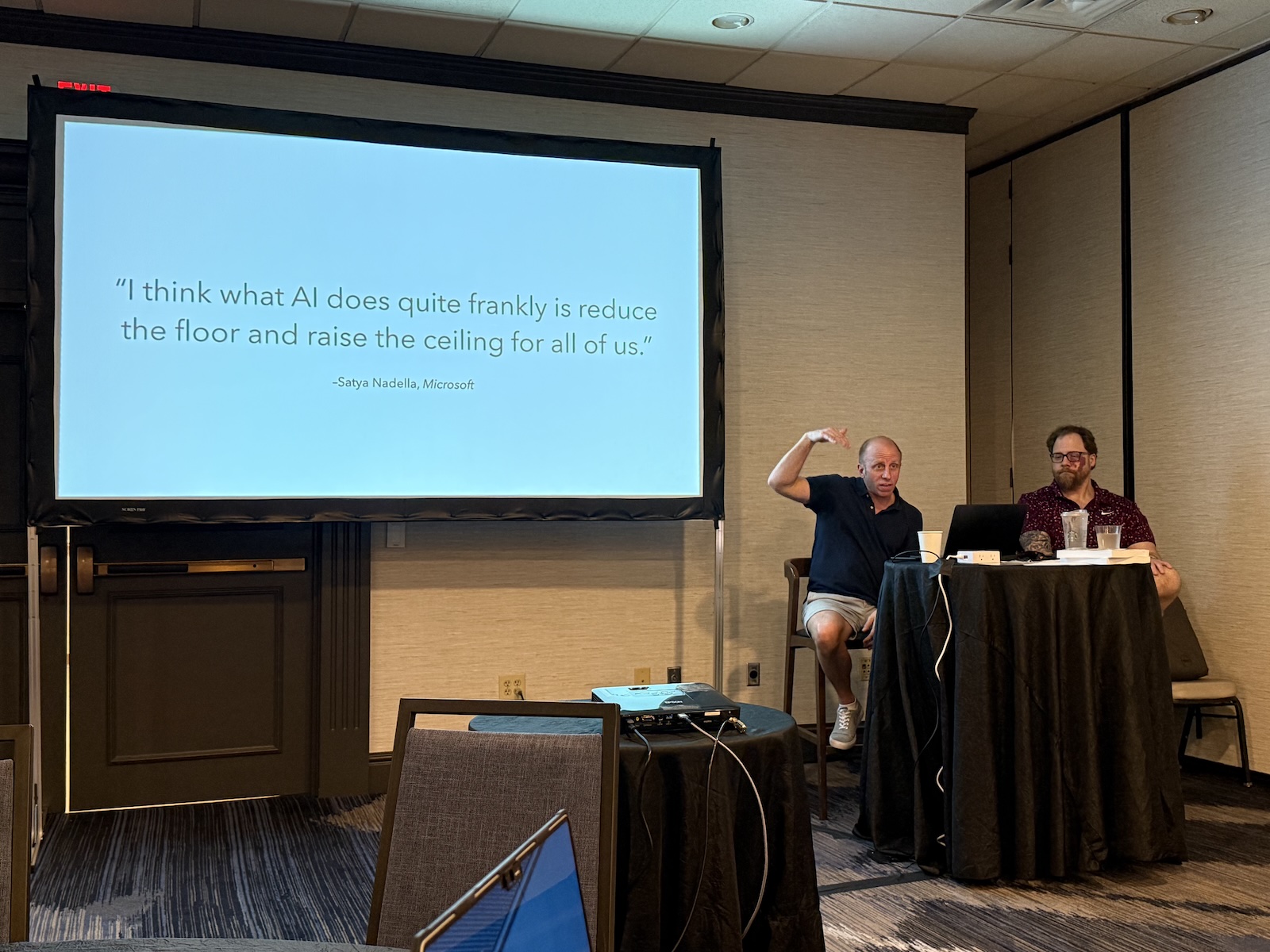

Dan closed with what I thought was the right framing: the floor has been lowered (more people can participate in building software) and the ceiling has been raised (experienced engineers can do more than ever before). Both of those things are true and good.

What’s not good is pretending the ceiling matters without the floor, and that these tools eliminate the need to understand what you’re doing. They don’t. They amplify what you already know. If you don’t know anything, they amplify that too.

Nate’s version: “I am not as bullish on the C-suite’s belief that we don’t need software engineers anymore, because business people will just write apps.”

He’s been watching business people almost-write-apps since COBOL. They haven’t quite gotten there yet.